Token Security advances AI agent protection with intent-based controls

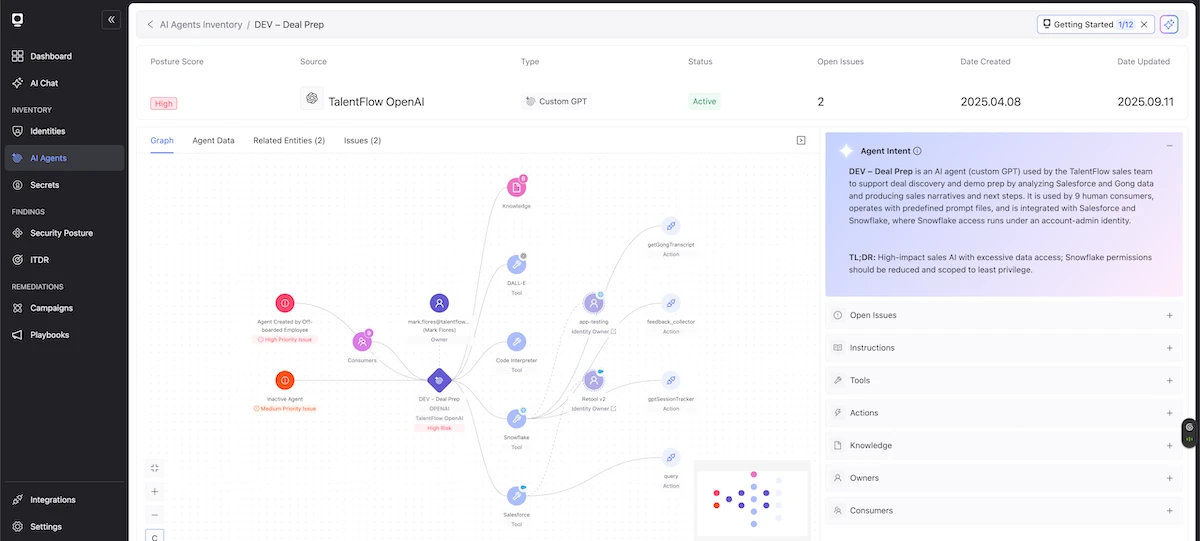

Token Security has unveiled intent-based AI agent security, a new approach that governs autonomous agents in enterprise environments by aligning their permissions with their intended purpose.

As organizations deploy autonomous AI agents across enterprise infrastructure, existing security models are struggling to contain the risks. Token Security has been advancing the concept of intent-based security for AI agents, using identity as the control plane for governing autonomous systems. Because AI agents interact with enterprise systems through service accounts, API credentials, and cloud roles, identity controls are a natural enforcement layer for governing what agents can access and execute.

“Prompt filtering and guardrails were not designed to fully contain the security risks introduced by autonomous AI agents,” said Itamar Apelblat, CEO of Token Security. “With our intent-based approach, the Token Security platform understands what AI agents are supposed to do and ensures they only have the permissions required to achieve their specified goals. As soon as their intent changes or they demonstrate risky behavior, our solution automatically intervenes to neutralize the threat.”

From guardrails to intent-based security

Because AI agents are non-deterministic and goal-oriented, two agents with identical permissions can behave very differently depending on what they are trying to accomplish. This unpredictability limits how well static permissions, inherited human roles, or past behavior can contain agent security risks.

Token Security’s intent-based AI agent security introduces an enforcement model that goes beyond prompt filtering and static policy-based controls by applying dynamic authorization. The Token Security platform operationalizes intent-based AI agent security through five core capabilities:

- Continuously discovering AI agents, their owners, and their access

- Understanding declared and observed agent intent to determine what agents are designed and scoped to do

- Dynamically creating and enforcing least privilege access policies aligned to defined intent

- Flagging and constraining actions that fall outside established intent boundaries

- Applying lifecycle governance controls to prevent access drift and orphaned agents
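
To make the idea concrete, the following is a minimal illustrative sketch of checking an agent's actions against its declared intent. It is not Token Security's implementation or API; the names `AgentIntent`, `IntentPolicy`, `authorize`, and the action strings are all hypothetical, and it assumes intent can be reduced to a fixed set of allowed actions.

```python
from dataclasses import dataclass, field
from typing import FrozenSet, List


@dataclass(frozen=True)
class AgentIntent:
    """Hypothetical record of an agent's declared purpose and scope."""
    agent_id: str
    owner: str
    purpose: str
    allowed_actions: FrozenSet[str]


@dataclass
class IntentPolicy:
    """Hypothetical least-privilege policy derived from a declared intent."""
    intent: AgentIntent
    flagged: List[str] = field(default_factory=list)

    def authorize(self, action: str) -> bool:
        # Allow only actions that fall inside the declared intent boundary.
        if action in self.intent.allowed_actions:
            return True
        # Anything else is denied and recorded for review.
        self.flagged.append(action)
        return False


# Illustrative agent: names and action strings are made up for this sketch.
intent = AgentIntent(
    agent_id="invoice-bot",
    owner="finance-team",
    purpose="read invoices and post payment summaries",
    allowed_actions=frozenset({"invoices:read", "summaries:write"}),
)
policy = IntentPolicy(intent)

print(policy.authorize("invoices:read"))  # True: within declared intent
print(policy.authorize("users:delete"))   # False: outside declared intent
print(policy.flagged)                     # ['users:delete']
```

A real system would presumably also compare declared intent with observed behavior and update policies as intent changes, which a static allow-list like this one does not capture.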

“Intent is the missing dimension in AI agent security, since security teams must understand what an agent is designed to accomplish before they can safely govern what it can access,” added Ido Shlomo, CTO of Token Security. “AI agents shouldn’t inherit the full permissions of the humans who create them. When they do, organizations lose visibility and control over what those systems can access and execute. By understanding what an agent is designed to do and enforcing access based on its stated purpose, organizations can keep autonomous systems operating within safe boundaries.”