Confluent introduces several new capabilities to optimize data streaming performance across the entire business

Confluent announced several new capabilities as part of its Q2 ’22 launch that deliver granular security, enterprise-wide observability, and mission-critical reliability at scale.

These capabilities are increasingly important as more businesses bring their data streaming workloads to the cloud to avoid the operational overhead of infrastructure management.

“Every company is in a race to transform their business and take advantage of the simplicity of cloud computing,” said Ganesh Srinivasan, Chief Product Officer, Confluent. “However, migrating to the cloud often comes with tradeoffs on security, monitoring insights, and uptime guarantees. With this launch, we make it possible to achieve those fundamental requirements without added complexity, so organizations can start innovating in the cloud faster.”

New role-based access controls (RBAC) enable granular permissions on the data plane level to ensure data compliance and privacy at scale

Data security is paramount in any organization, especially when migrating to public clouds. To operate efficiently and securely, organizations need to ensure the right people have access to only the right data. However, controlling access to sensitive data all the way down to individual Apache Kafka topics takes significant time and resources because of the complex scripts needed to manually set permissions.

Last year, Confluent introduced RBAC for Confluent Cloud, enabling customers to streamline this process for critical resources like production environments, sensitive clusters, and billing details, and making role-based permissions as simple as clicking a button. With this launch, RBAC now covers access to individual Kafka resources including topics, consumer groups, and transactional IDs. Organizations can set clear roles and responsibilities for administrators, operators, and developers, so that each person can access only the data required for their job, on both the data plane and the control plane.
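To make the idea of resource-level role bindings concrete, here is a toy model in Python. It is a sketch only and does not use Confluent's API: the `RoleBinding` type, the `reader` and `writer` role names, the prefix-matching rule, and the example principals are all assumptions made for illustration.

```python
from dataclasses import dataclass
from typing import List


@dataclass(frozen=True)
class RoleBinding:
    """Hypothetical binding of a principal to a role on one Kafka resource."""
    principal: str
    role: str
    resource_type: str  # "topic", "group", or "transactional-id"
    name: str
    prefixed: bool = False  # if True, `name` matches any resource starting with it


# Hypothetical mapping from role name to the operations it permits.
ROLE_OPERATIONS = {"reader": {"read"}, "writer": {"write"}}


def is_allowed(bindings: List[RoleBinding], principal: str, operation: str,
               resource_type: str, resource_name: str) -> bool:
    """Return True if any binding grants `operation` on the named resource."""
    for b in bindings:
        if b.principal != principal or b.resource_type != resource_type:
            continue
        if operation not in ROLE_OPERATIONS.get(b.role, set()):
            continue
        if b.prefixed:
            matches = resource_name.startswith(b.name)
        else:
            matches = resource_name == b.name
        if matches:
            return True
    return False  # deny by default


bindings = [
    RoleBinding("dev-alice", "reader", "topic", "payments.", prefixed=True),
    RoleBinding("dev-alice", "reader", "group", "payments-app"),
    RoleBinding("svc-ledger", "writer", "transactional-id", "ledger-tx"),
]

print(is_allowed(bindings, "dev-alice", "read", "topic", "payments.refunds"))
print(is_allowed(bindings, "dev-alice", "write", "topic", "payments.refunds"))
print(is_allowed(bindings, "dev-alice", "read", "topic", "hr.salaries"))
```

In this model a developer bound to the `payments.` prefix can read any payments topic but cannot write to it or read topics elsewhere, which is the "only the right data" property described above.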

“Here at Neon we have many teams with different roles and business contexts that use Confluent Cloud,” said Thiago Pereira de Souza, Senior IT Engineer, Neon. “Therefore, our environment needs a high level of security to support these different needs. With RBAC, we can isolate data for access only to people who really need to access it, and operations to people who really need to do it. The result is more security and less chance of failure and data leakage, reducing the scope of action.”

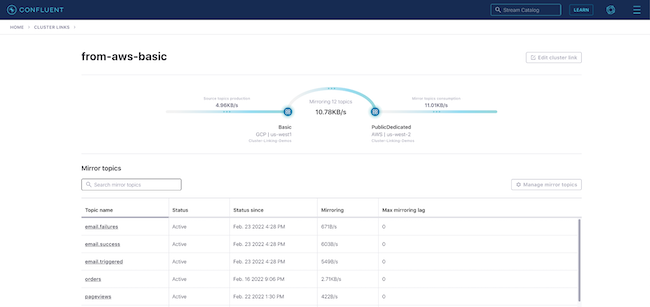

Expanded Confluent Cloud Metrics API delivers enterprise-wide observability to optimize data streaming performance across the entire business

Businesses need a strong understanding of their IT stack to effectively deliver high-quality services their customers demand while efficiently managing operating costs. The Confluent Cloud Metrics API already provides the easiest and fastest way for customers to understand their usage and performance across the platform.

Confluent is introducing two new insights for even greater visibility into data streaming deployments, alongside an expansion of its third-party monitoring integrations to ensure these critical metrics are available wherever they are needed:

- Customers can now easily understand organizational usage of data streams across their business and sub-divisions to see where and how resources are used. This capability is particularly important to enterprises that are expanding their use of data streaming and need to manage internal chargebacks by business unit. Additionally, it helps teams identify where resources are being over- or underutilized, down to the level of an individual user, in order to optimize resource allocation and reduce costs.

- New capabilities for consumer lag monitoring help organizations ensure their mission-critical services are always meeting customer expectations. With real-time insights, customers can identify hotspots in their data pipelines and see where resources need to be scaled to avoid an incident before it occurs. Additionally, with records exposed as a time series, teams are equipped to make informed decisions based on deep historical context when setting or adjusting SLOs.

- A new, first-class integration with Grafana Cloud gives customers deep visibility into Confluent Cloud from within the monitoring tool they already use. Along with recently announced integrations, this update allows businesses to monitor their data streams directly alongside the rest of their technology stack through their service of choice.
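For readers unfamiliar with consumer lag, the short Python sketch below shows the underlying arithmetic: per partition, lag is the log end offset minus the consumer group's committed offset. It does not call the Confluent Cloud Metrics API. The function names, the sample offsets, the threshold, and the choice to treat a missing committed offset as 0 are all assumptions for illustration.

```python
from typing import Dict, List, Tuple

Partition = Tuple[str, int]  # (topic, partition number)


def consumer_lag(end_offsets: Dict[Partition, int],
                 committed: Dict[Partition, int]) -> Dict[Partition, int]:
    """Lag per partition: log end offset minus the group's committed offset."""
    lag = {}
    for tp, end in end_offsets.items():
        # Simplification: a partition with no committed offset counts from 0.
        lag[tp] = max(end - committed.get(tp, 0), 0)
    return lag


def hotspots(lag: Dict[Partition, int], threshold: int) -> List[Partition]:
    """Partitions whose lag exceeds the (hypothetical) alerting threshold."""
    return sorted(tp for tp, value in lag.items() if value > threshold)


# Made-up offsets for a three-partition topic.
end_offsets = {("orders", 0): 1500, ("orders", 1): 1520, ("orders", 2): 9800}
committed = {("orders", 0): 1495, ("orders", 1): 1520, ("orders", 2): 4300}

lag = consumer_lag(end_offsets, committed)
print(lag)
print(hotspots(lag, threshold=1000))  # only ("orders", 2) is far behind
```

Recording these values at regular intervals yields the kind of time series that the lag-monitoring bullet above describes as input for setting or adjusting SLOs.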

To enable easy and cost-effective integration of more data from high-value systems, Confluent’s Premium Connector for Oracle Change Data Capture (CDC) Source is now available for Confluent Cloud. The fully managed connector enables users to capture valuable change events from an Oracle database and see them in real time within Confluent’s leading cloud-native Kafka service without any operational overhead.

New 99.99% uptime SLA for Apache Kafka provides comprehensive coverage for sensitive data streaming workloads in the cloud

One of the biggest concerns with relying on open source systems for business-critical workloads is reliability. Downtime is unacceptable for businesses operating in a digital-first world. It not only has a negative financial and business impact but also often causes long-term damage to a brand’s reputation.

Confluent now offers a 99.99% uptime SLA for both Standard and Dedicated fully managed, multi-zone clusters. Covering not only infrastructure but also Kafka performance, critical bug fixes, security updates, and more, this comprehensive SLA allows customers to run even the most sensitive, mission-critical data streaming workloads in the cloud with high confidence.
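As a rough guide to what 99.99% means in practice, the following Python sketch converts an uptime percentage into a downtime budget. It assumes a simple calendar window (30 or 365 days); how Confluent actually measures the SLA, including the measurement period and any exclusions, is not stated here, and the function name is illustrative.

```python
def allowed_downtime_minutes(uptime_pct: float, period_days: float) -> float:
    """Maximum downtime, in minutes, that still meets the uptime percentage."""
    total_minutes = period_days * 24 * 60
    return total_minutes * (1 - uptime_pct / 100)


monthly = allowed_downtime_minutes(99.99, 30)
yearly = allowed_downtime_minutes(99.99, 365)

print(f"{monthly:.2f} minutes per 30-day month")  # 4.32
print(f"{yearly:.2f} minutes per 365-day year")   # 52.56
```

Under these assumptions, a 99.99% target leaves a budget of roughly four minutes of downtime per month, or under an hour per year.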

Beyond reliability, engineering teams and developers face the challenge of understanding the new programming paradigm of stream processing and the different use cases it enables. To help jumpstart stream processing use cases, Confluent is introducing Stream Processing Use Case Recipes. Sourced from customers and validated by experts, this set of over 25 of the most popular real-world use cases can be launched in Confluent Cloud with the click of a button, enabling developers to quickly start unlocking the value of stream processing.

“ksqlDB made it super easy to get started with stream processing thanks to its simple, intuitive SQL syntax,” said Jeffrey Jennings, Vice President of Data and Integration Services, ACERTUS. “By easily accessing and enriching data in real-time with Confluent, we can provide the business with immediately actionable insights in a timely, consistent, and cost-effective manner across multiple teams and environments, rather than waiting to process in silos across downstream systems and applications. Plus, with the Stream Processing Use Case recipes, we will be able to leverage ready-to-go code samples to jumpstart new real-time initiatives for the business.”