Novee introduces autonomous AI red teaming to hunt LLM vulnerabilities

Novee today introduced AI Red Teaming for LLM Applications, a capability for its AI penetration testing platform designed to uncover security vulnerabilities in LLM-powered applications before attackers can exploit them.

As enterprises deploy AI-enabled software, from customer-facing chatbots to internal copilots and autonomous agents, security teams face a new class of risks that traditional pentesting tools were never designed to detect, including prompt injection, jailbreak attempts, data exfiltration, and manipulation of agent behavior.

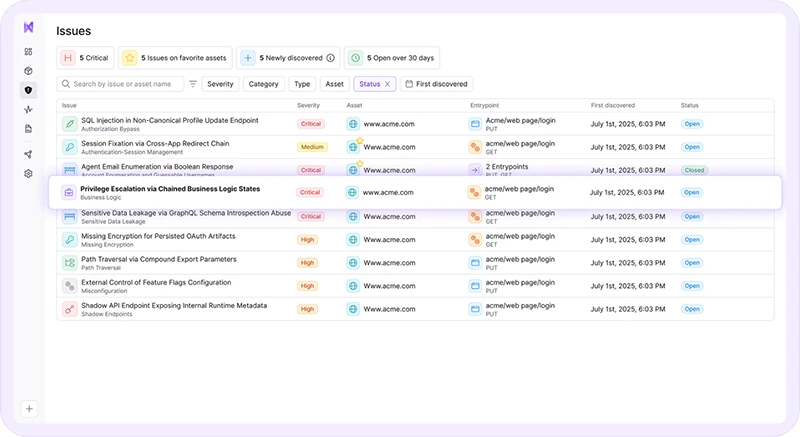

Unlike conventional application security tools built for web and infrastructure testing, Novee’s AI pentesting agent is specifically designed to continuously probe AI-enabled applications. The agent autonomously simulates sophisticated real-world attack scenarios, chaining techniques together to identify vulnerabilities that manual testing or static scanners often miss.

Security teams can direct the agent at any AI-enabled application, including chatbots, copilots, autonomous agents, and LLM-powered workflows, to perform comprehensive security testing. The system evaluates how the application behaves under adversarial attacks and produces a vulnerability assessment with actionable remediation guidance.

“I’ve spent twenty years on the offensive side of cyber, inside government operations, protecting critical infrastructure, and now building AI systems that think like real attackers,” said Ido Geffen, CEO of Novee. “What we see consistently is that attackers compress timelines dramatically. The window between vulnerability and exploitation can shrink to minutes. Defending against that requires continuous testing, not periodic assessments.”

Novee’s research team led development of the product, distilling the techniques used to identify high-severity vulnerabilities into the AI tool. The research team recently disclosed a vulnerability affecting Cursor that allows attackers to influence the context window of a coding agent and achieve full remote code execution on the developer’s workstation. Novee has additional findings currently under responsible disclosure with other vendors. Findings from this ongoing research are fed directly into the agent’s training so that it keeps pace with how real attackers find and exploit new AI vulnerabilities.

“AI applications introduce an entirely new attack surface, but most organizations are still testing them with tools designed for web applications and infrastructure,” said Gon Chalamish, CPO of Novee. “Attackers are already adapting their techniques for AI systems. Security teams need a way to test those systems the same way adversaries attack them.”

The agent is designed to work with any LLM-powered application regardless of the underlying model provider or architecture, including deployments built on OpenAI, Anthropic, or open-source models. It can also integrate into existing security testing workflows and CI/CD pipelines, allowing organizations to test AI-enabled applications as part of their broader development and security processes.

Novee’s AI pentesting agent is currently available in beta, and the company will demonstrate the technology during RSAC 2026 Conference at booth S-0262. To learn more and schedule a meeting, go here.