SCW Trust Agent: AI tracks AI influence in code to reduce software risk

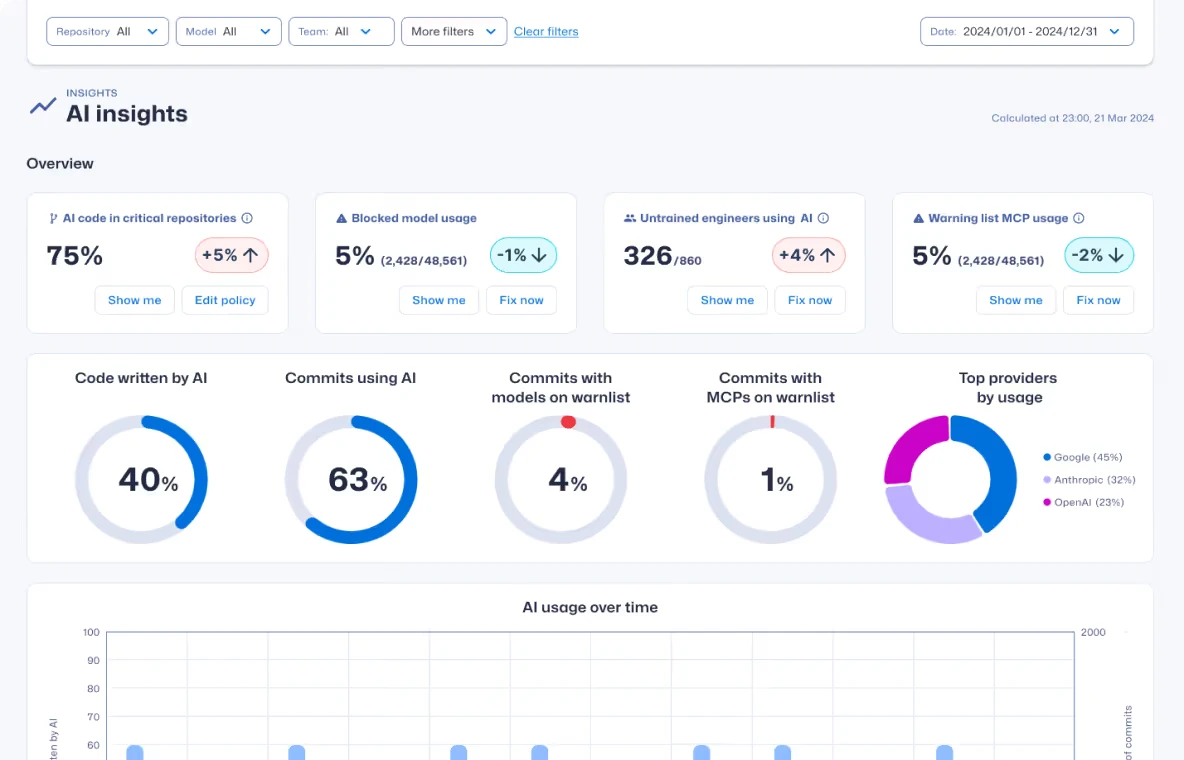

Secure Code Warrior has announced SCW Trust Agent: AI, a governance solution designed to make AI influence in software development visible, attributable, and enforceable at the point of commit, enabling enterprises to scale AI coding tools with measurable control over software risk. Organizations can trace which AI models influenced specific commits, correlate that influence with vulnerability exposure, and take corrective action before insecure code reaches production.

According to Sonar’s 2026 State of Code Developer Survey, 72% of developers report using AI coding tools in their development processes every day. Yet most enterprises lack visibility into how those tools influence production code, creating governance blind spots as development velocity accelerates. According to Gartner, by the end of this year, at least 80% of unauthorized AI transactions will result from internal policy violations rather than malicious attacks, underscoring the need for enforceable oversight inside development environments.

Secure Code Warrior is defining AI software governance with SCW Trust Agent: AI. By embedding commit-level visibility and enforceable oversight into development workflows, the platform enables organizations to scale AI-driven development with measurable control over software risk while reinforcing secure coding behavior across both human and AI-generated code.

“SCW Trust Agent: AI provides organizations the quantitative pathway to effectively measure the risk posture of their development environment in the AI era, whether the contributing ‘developer’ is human or AI,” said Pieter Danhieux, CEO, Secure Code Warrior.

“Beginning with comprehensive observability and traceability of AI-generated coding, MCP and AI tool usage, SCW Trust Agent: AI creates a foundation for more effective, adaptive learning that hones in with precision on the most relevant areas and fundamentally changes behavior among development teams, offsetting the introduction of AI-enabled vulnerabilities over time,” Danhieux continued.

SCW Trust Agent: AI moves organizations beyond passive visibility into active, operational governance by connecting:

- AI usage visibility: Maintain a verifiable record of which LLMs, including sanctioned and “shadow AI” models, influenced specific commits, supporting governance and audit requirements without storing source code or prompts.

- Proprietary LLM security benchmarking: Leverage Secure Code Warrior’s LLM security benchmark data to evaluate models and enforce approved AI usage policies based on measurable security performance.

- MCP discovery and supply chain insight: Track which Model Context Protocol (MCP) servers are installed and active to prevent AI agents from accessing sensitive internal tools or databases through unvetted or risky connections.

- Commit-level risk correlation and enforcement: Correlate developers’ skill sets (as measured by SCW Trust Score) and their AI usage with vulnerability benchmarks to identify the risk level, and enforce policy before code reaches production.

- Adaptive learning: Mitigate risk by correlating AI-generated code and contributor secure coding skill to automatically deliver the most relevant training to developers and build secure coding proficiency.
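
To make the idea of commit-level enforcement concrete, here is a minimal sketch of how a policy check might combine the model that influenced a commit with the contributor's skill score. It is an illustration only, not Secure Code Warrior's implementation or API: the `Commit` and `Policy` types, the `evaluate` function, the score scale, the threshold, and the model names are all hypothetical.

```python
from dataclasses import dataclass

# Hypothetical sketch: every name, threshold, and model identifier here is
# an assumption for illustration, not part of SCW Trust Agent: AI.


@dataclass(frozen=True)
class Commit:
    sha: str
    author_skill_score: int           # stand-in for a per-developer skill score
    ai_models: tuple[str, ...] = ()   # models recorded as influencing the commit


@dataclass(frozen=True)
class Policy:
    approved_models: frozenset[str]   # models the organization has sanctioned
    min_skill_for_ai: int             # minimum score for unreviewed AI-assisted commits


def evaluate(commit: Commit, policy: Policy) -> tuple[str, list[str]]:
    """Return a decision ("allow", "review", or "block") and the reasons for it."""
    if not commit.ai_models:
        # No recorded AI influence: this sketch applies no extra checks.
        return "allow", []

    unapproved = sorted(set(commit.ai_models) - policy.approved_models)
    if unapproved:
        # Unsanctioned ("shadow AI") models block the commit outright.
        return "block", [f"unapproved model: {m}" for m in unapproved]

    if commit.author_skill_score < policy.min_skill_for_ai:
        # Approved model, but the contributor's score is below the threshold.
        return "review", ["skill score below threshold for AI-assisted commits"]

    return "allow", []


policy = Policy(approved_models=frozenset({"model-a"}), min_skill_for_ai=600)
decision, reasons = evaluate(Commit("abc123", 700, ("model-a", "model-x")), policy)
print(decision, reasons)
```

In this sketch an unsanctioned model takes precedence over the skill check, so a commit touched by a "shadow AI" model is blocked regardless of who wrote it. A real deployment would presumably also weigh model-level vulnerability benchmarks, which are omitted here.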