NVIDIA puts GPU orchestration in community hands

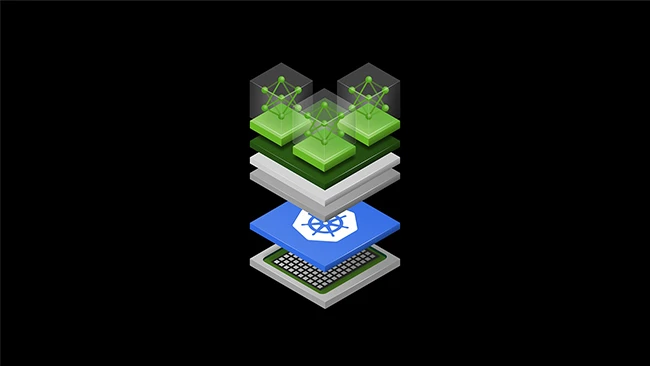

GPU-accelerated AI workloads now run on Kubernetes in the large majority of enterprise environments. Managing those workloads at scale has required specialized tooling that, until now, remained under vendor control. NVIDIA moved to change that at KubeCon Europe in Amsterdam this week, donating its Dynamic Resource Allocation (DRA) Driver for GPUs to the Cloud Native Computing Foundation (CNCF).

The transfer shifts ownership of the driver from NVIDIA to the broader Kubernetes community. Developers across companies can now contribute directly to the codebase, propose changes, and influence the direction of GPU scheduling in Kubernetes without routing requests through a single vendor.

What the driver does

The DRA Driver for GPUs sits between Kubernetes and the underlying GPU hardware, handling how compute resources get allocated to containerized workloads. The donated version supports several capabilities relevant to large-scale AI infrastructure: GPU sharing through NVIDIA Multi-Process Service and Multi-Instance GPU technologies, native support for multi-node NVLink interconnects used in Grace Blackwell systems, dynamic reconfiguration of hardware resources during runtime, and fine-grained requests for specific memory settings or interconnect arrangements.
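To make the allocation flow concrete, the sketch below builds the two Kubernetes objects a workload would typically use to ask for a GPU through DRA: a ResourceClaimTemplate and a Pod that references it. It is an illustration under stated assumptions, not NVIDIA's documented configuration. It assumes the `resource.k8s.io/v1` API shape (older beta versions place `deviceClassName` directly on the request) and a device class named `gpu.nvidia.com`. The object names and container image are hypothetical.

```python
import json


def gpu_claim_template(name: str, device_class: str = "gpu.nvidia.com") -> dict:
    """Build a ResourceClaimTemplate manifest requesting one device.

    Assumption: the resource.k8s.io/v1 schema, and a DeviceClass called
    "gpu.nvidia.com" installed by the driver.
    """
    return {
        "apiVersion": "resource.k8s.io/v1",
        "kind": "ResourceClaimTemplate",
        "metadata": {"name": name},
        "spec": {
            "spec": {
                "devices": {
                    "requests": [
                        {"name": "gpu", "exactly": {"deviceClassName": device_class}}
                    ]
                }
            }
        },
    }


def pod_using_claim(pod_name: str, image: str, template_name: str) -> dict:
    """Build a Pod manifest whose single container consumes the claim."""
    return {
        "apiVersion": "v1",
        "kind": "Pod",
        "metadata": {"name": pod_name},
        "spec": {
            # Pod-level declaration: create a claim from the template.
            "resourceClaims": [
                {"name": "gpu", "resourceClaimTemplateName": template_name}
            ],
            "containers": [
                {
                    "name": "worker",
                    "image": image,  # hypothetical image name
                    # Container-level reference to the pod-level claim.
                    "resources": {"claims": [{"name": "gpu"}]},
                }
            ],
        },
    }


template = gpu_claim_template("single-gpu")
pod = pod_using_claim("inference-worker", "example.com/inference:latest", "single-gpu")

if __name__ == "__main__":
    # Kubernetes accepts JSON manifests as well as YAML.
    print(json.dumps([template, pod], indent=2))
```

The scheduler matches the claim against devices the driver has published, so the Pod only names what it needs rather than counting opaque `nvidia.com/gpu` units.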

Confidential containers for AI workloads

Alongside the DRA donation, NVIDIA announced GPU support for Kata Containers, developed in collaboration with the CNCF’s Confidential Containers community. Kata Containers runs workloads inside lightweight virtual machines that behave like containers, providing stronger workload isolation than standard container runtimes.

Adding GPU support to Kata extends hardware acceleration into that isolated environment, which allows AI workloads to run with additional protections around data confidentiality. Organizations looking to implement confidential computing for sensitive inference or training jobs gain a path to do so within the existing Kubernetes ecosystem.
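In Kubernetes, a workload opts into an alternative runtime such as Kata through the Pod's `runtimeClassName` field. The sketch below shows that selection step only. The runtime class name `kata-gpu`, the Pod name, and the image are hypothetical; the actual class name depends on how Kata is installed in a given cluster.

```python
import copy


def with_runtime_class(pod: dict, runtime_class: str) -> dict:
    """Return a copy of a Pod manifest that selects the given RuntimeClass."""
    isolated = copy.deepcopy(pod)  # leave the caller's manifest untouched
    isolated.setdefault("spec", {})["runtimeClassName"] = runtime_class
    return isolated


base_pod = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "sensitive-inference"},  # hypothetical name
    "spec": {
        "containers": [
            {"name": "model", "image": "example.com/model-server:latest"}
        ]
    },
}

# "kata-gpu" is a placeholder; use the RuntimeClass defined in your cluster.
kata_pod = with_runtime_class(base_pod, "kata-gpu")
```

Because the change is a single field on the Pod spec, existing manifests can move to the isolated runtime without restructuring.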

Industry backing

The DRA donation comes with participation from several large cloud and infrastructure vendors. Amazon Web Services, Broadcom, Canonical, Google Cloud, Microsoft, Nutanix, Red Hat, and SUSE are collaborating with NVIDIA on the effort.

Chris Aniszczyk, CTO of CNCF, commented on the significance of the move: “NVIDIA’s deep collaboration with the Kubernetes and CNCF community to upstream the NVIDIA DRA Driver for GPUs marks a major milestone for open source Kubernetes and AI infrastructure. By aligning its hardware innovations with upstream Kubernetes and AI conformance efforts, NVIDIA is making high-performance GPU orchestration seamless and accessible to all.”

Red Hat CTO Chris Wright connected the donation to enterprise AI strategy, noting that open source brings standardization to the infrastructure components that fuel production AI workloads. Ricardo Rocha, lead of platforms infrastructure at CERN, pointed to the value for scientific computing, where community-driven tooling helps organizations process petabytes of data across both traditional and machine learning workloads.

Additional open source projects

Several other projects tied to AI infrastructure security and agent management also featured at KubeCon. NVIDIA OpenShell, announced at GTC last week, is a runtime for autonomous agents with fine-grained, programmable policy controls. It integrates natively with Linux, eBPF, and Kubernetes, and is designed to let operators define security and privacy boundaries for agentic workloads at a granular level. NVSentinel, a GPU fault remediation system, and AI Cluster Runtime, an agentic AI framework, were also announced at GTC.

On the orchestration side, NVIDIA released Grove, an open source Kubernetes API for managing AI workloads across GPU clusters. Grove lets developers define complex inference systems in a single declarative resource and is being integrated with the llm-d inference stack for use across the Kubernetes community. Grove extends the Dynamo 1.0 ecosystem, which NVIDIA released earlier.

Security relevance

The combination of confidential container support, OpenShell policy controls, and GPU-level workload isolation addresses a gap that security teams have tracked in GPU-accelerated Kubernetes environments: the absence of hardware-backed isolation for AI inference jobs handling sensitive data.

Kata Containers with GPU support gives operators a mechanism to enforce stronger boundaries around workloads at the hardware level, using existing Kubernetes primitives. OpenShell adds a layer of programmable controls for agentic workloads, where runtime behavior can be harder to constrain through conventional access management policies. Together, these tools extend security controls into areas of the AI stack that standard container security has not previously reached.