4 steps for building an orchestrated authorization policy for zero trust

The zero-trust approach places a great deal of emphasis on access. Authentication (the act of verifying that someone is who they say they are) is only part of the picture: authorization is just as important, because it determines what someone can do with that access. Policies must be written to account for this, and the strongest policies are built on an authorization model that is orchestrated in nature.

An orchestrated and centralized approach to authorization builds dynamic, fine-grained access control (FGAC) policies that meet the demands of modern security strategies, including zero trust. Taking a zero-trust approach to policy building removes implicit trust from the equation by continuously validating users at every layer of the application, based on one or more attributes collected from multiple sources (for example, who, what, where, when, why and how).

The concept of authorization is not new. Many organizations built ad-hoc policies as they created or adopted new applications, with each policy isolated inside its own application and often forgotten once created. Shifting to an orchestrated approach to authorization replaces these silos with a centralized, overarching view of policies. Organizations no longer create policies from scratch each time a new application is added. Instead, they select from existing policies, copying and modifying them as needed to benefit from past work. And because the policies are centralized, a change to an existing policy is made once and applies everywhere that policy is used (a “one-to-many” approach), which saves valuable time and improves security.
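The one-to-many effect can be sketched in a few lines of Python. This is only an illustration under stated assumptions: the `PolicyRegistry` class, the `low_risk` policy, the `risk_score` attribute and the application names are all hypothetical and do not describe any particular product.

```python
from typing import Callable, Dict, List

Attributes = Dict[str, object]
Rule = Callable[[Attributes], bool]


class PolicyRegistry:
    """Hypothetical central store: policies are defined once and shared by many apps."""

    def __init__(self) -> None:
        self._policies: Dict[str, Rule] = {}
        self._bindings: Dict[str, List[str]] = {}

    def define(self, name: str, rule: Rule) -> None:
        # Defining an existing name replaces the rule for every bound application.
        self._policies[name] = rule

    def bind(self, app: str, policy_name: str) -> None:
        # Applications reference policies by name instead of copying the logic.
        self._bindings.setdefault(app, []).append(policy_name)

    def is_allowed(self, app: str, attrs: Attributes) -> bool:
        names = self._bindings.get(app, [])
        # Assumption: an application with no bound policy is denied by default.
        return bool(names) and all(self._policies[n](attrs) for n in names)


registry = PolicyRegistry()
registry.define("low_risk", lambda attrs: attrs["risk_score"] < 50)
registry.bind("crm", "low_risk")
registry.bind("billing", "low_risk")

request = {"risk_score": 40}
before = [registry.is_allowed(app, request) for app in ("crm", "billing")]

# One central change tightens the threshold for both applications at once.
registry.define("low_risk", lambda attrs: attrs["risk_score"] < 30)
after = [registry.is_allowed(app, request) for app in ("crm", "billing")]
```

In this sketch the same request is allowed by both applications before the change and denied by both afterwards, without touching either application.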

A policy based on centralized authorization orchestrated across an enterprise requires several key elements obtained through the policy modeling process. There are four key steps to this process.

Identifying applications

The first step an organization needs to take when moving to this model is to identify which applications and data sources require a zero-trust approach. This forces a triage: deciding which applications and data sources are the most crucial and should be the initial targets for deployment. Most enterprise-sized organizations now use hundreds of applications, and applying policies to all of them right away is not realistic. The identification step is about determining which are the most important to tackle first. Others are added over time, and adding them becomes much more efficient as more policies are built. This first step enables a “start small, grow fast” approach.

Determining requirements

The next step is determining what the requirements are. During this phase of the project, it is critical for security and/or identity leaders (who own this centralized process) to involve business stakeholders (application or product owners) from the start.

The requirements must be driven by the business. Some might point out that the business may not understand the intricacies of identity and access management (IAM) capabilities and protocols, but business stakeholders do understand the information their application accesses and how that information is affected by compliance regulations. Based on their guidance, requirements should be written in natural language that both the business and the IT team can easily understand.

Considering attributes

Now that requirements are established, identifying the right attributes needed to inform authorization policies and controls is the next logical step.

For example, let’s say one of the requirements for a certain application or data source is that no user outside of the European Union (EU) should be able to see personally identifiable information (PII) from customer data where the customer’s country of origin is in the EU. Right away, we can consider a few attributes that will be needed for this requirement, one being the physical location of the user requesting access to the application, and the second being the country of origin of the record the user is looking to access. There would likely be other attributes that could be considered such as the user’s role, what device they are on, user’s risk score, time of day and so on, but this gives you an idea of the type of attributes that can be considered.

Of note, there is no limit on how many attributes an organization can consider, or on how complex a policy can be in terms of the requirements it is trying to meet. The goal is therefore to identify the right attributes (quality over quantity) to balance security with performance. Context is the key consideration, and it is derived from the attributes that are evaluated. For instance, considering the physical location of a user together with the time of the request can provide context that neither attribute provides on its own.
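To make the idea of attributes and context concrete, the following is a minimal sketch in Python. Everything in it is an assumption made for illustration: the `AccessContext` fields, the abbreviated `EU_COUNTRIES` set, the 0 to 100 risk scale and the 08:00 to 18:00 business-hours window are hypothetical, not taken from any specific product or regulation.

```python
from dataclasses import dataclass
from datetime import time

# Hypothetical, deliberately incomplete list of EU country codes.
EU_COUNTRIES = frozenset({"DE", "ES", "FR", "IE", "IT", "NL"})


@dataclass(frozen=True)
class AccessContext:
    """Attributes gathered from multiple sources for a single request."""
    user_role: str
    user_country: str      # physical location of the requesting user
    record_country: str    # country of origin of the requested record
    device_managed: bool
    risk_score: int        # assumed scale: 0 (low) to 100 (high)
    request_time: time


def context_signals(ctx: AccessContext) -> dict:
    """Combine raw attributes into the contextual facts a policy can test."""
    return {
        "user_in_eu": ctx.user_country in EU_COUNTRIES,
        "record_in_eu": ctx.record_country in EU_COUNTRIES,
        # Assumed business hours; location plus time gives more context
        # than either attribute alone.
        "business_hours": time(8, 0) <= ctx.request_time < time(18, 0),
    }


ctx = AccessContext(
    user_role="analyst",
    user_country="US",
    record_country="DE",
    device_managed=True,
    risk_score=20,
    request_time=time(23, 30),
)
signals = context_signals(ctx)
```

Here a user located outside the EU is requesting an EU record late at night, a combination that a policy could treat differently from the same request made from inside the EU during working hours.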

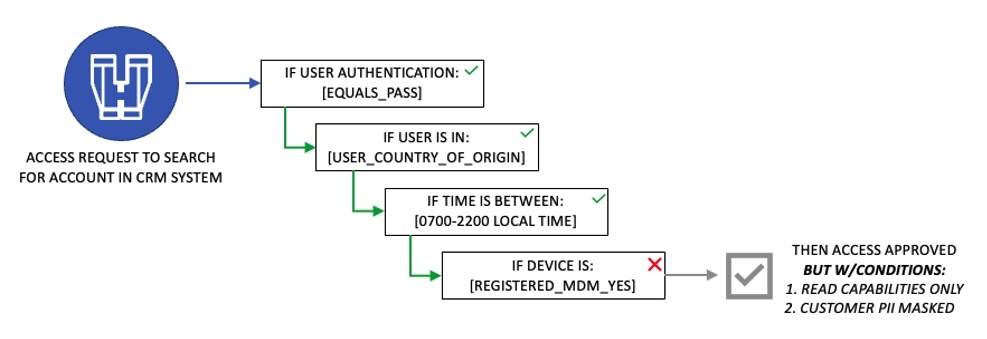

Here’s an example:

Authoring policies

The final step of a centralized, orchestrated approach – and where the concept of zero trust comes into action – is in policy authoring. With the first three steps completed, now it’s time to write the policies to be deployed across the organization.

These policies can be as simple or as complex as needed to achieve overall organizational goals. A policy follows the pattern of IF-THEN statements about the subject, the resource (or object) and the action. This should not be confused with other IAM solutions that may apply role-based access control (RBAC) as part of their authentication process. That is important too, but this step refers to a policy that takes effect after authentication has occurred. A user can often be authenticated while a policy still limits what actions they can take or what information they can see. Considering the example included under the last step, the policy might look something like this:
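As a hedged illustration, the EU requirement from the previous step could be written as a single IF-THEN rule over subject, resource and action. The `Request` type, the `view_pii` action name, the abbreviated `EU_COUNTRIES` set and the `PERMIT`/`DENY` strings are assumptions made for this sketch, not the syntax of any real policy engine.

```python
from dataclasses import dataclass

# Hypothetical, deliberately incomplete list of EU country codes.
EU_COUNTRIES = frozenset({"DE", "ES", "FR", "IE", "IT", "NL"})


@dataclass(frozen=True)
class Request:
    subject_country: str   # where the (already authenticated) user is located
    resource_country: str  # country of origin of the customer record
    action: str            # what the user is trying to do


def decide(req: Request) -> str:
    # IF the action is viewing PII
    # AND the record's country of origin is in the EU
    # AND the user is located outside the EU
    # THEN deny.
    if (
        req.action == "view_pii"
        and req.resource_country in EU_COUNTRIES
        and req.subject_country not in EU_COUNTRIES
    ):
        return "DENY"
    # Assumption: this sketch models one rule only, so everything else is
    # permitted here. A real deployment would combine many rules and would
    # more likely default to deny.
    return "PERMIT"


eu_user = decide(Request("FR", "DE", "view_pii"))
non_eu_user = decide(Request("US", "DE", "view_pii"))
```

Both users are authenticated in this scenario; only the authorization decision differs.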

Authoring policies should create a decision tree that could still enable business to be conducted under the right conditions while protecting sensitive information. In that context, here’s an example of what could be done for the same policy where the answer to one of the questions is no.
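One way to sketch such a decision tree in Python is to return the record with its PII masked instead of refusing the request outright when the user is outside the EU. This is only one possible outcome for the "no" branch; the field names in `PII_FIELDS`, the `"***"` mask, the abbreviated `EU_COUNTRIES` set and the `view_customer` function are all hypothetical.

```python
# Hypothetical, deliberately incomplete list of EU country codes.
EU_COUNTRIES = frozenset({"DE", "ES", "FR", "IE", "IT", "NL"})
# Assumed names of the fields that hold personally identifiable information.
PII_FIELDS = frozenset({"name", "email"})


def view_customer(record: dict, user_country: str) -> dict:
    """Return the view of a customer record this user is allowed to see."""
    record_in_eu = record["country"] in EU_COUNTRIES
    user_in_eu = user_country in EU_COUNTRIES

    # Question 1: is the record's country of origin in the EU?
    # If not, the EU requirement does not apply.
    if not record_in_eu:
        return dict(record)

    # Question 2: is the user located in the EU? If yes, show the full record.
    if user_in_eu:
        return dict(record)

    # The answer was no: business can still proceed, but PII is masked.
    return {k: ("***" if k in PII_FIELDS else v) for k, v in record.items()}


customer = {"id": 7, "name": "Ana", "email": "ana@example.com", "country": "ES"}
seen_from_fr = view_customer(customer, "FR")
seen_from_us = view_customer(customer, "US")
```

In this sketch the user outside the EU can still work with the record's non-sensitive fields, while the name and email address are hidden.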

The bottom line: the core of the zero-trust methodology is to never trust and always verify. By leveraging a centralized and orchestrated approach to authorization, security and/or identity leaders can craft policies that are as complex or as simple as needed. This approach lets the organization verify as many attributes as it needs, providing the context required to make an accurate and reasonable access decision.