Researchers uncover AI-powered vishing platform

A vishing-as-a-service platform that helps scammers carry out so-called “press 1” scams is misusing text-to-speech (TTS) capabilities provided by AI voice technology company ElevenLabs, Mirage Security researchers claim.

How “press 1” vishing scams work

In “press 1” scams, fraudsters spoof the phone numbers of trusted institutions (e.g., banks), call potential victims, and use pre-recorded messages to scare them into sharing sensitive information.

When impersonating banks, for example, the fraudsters first play a message claiming that the user’s account has been compromised or that the bank has spotted fraudulent transactions tied to it, and instruct potential victims to press “1” on their phone keypad if they want to resolve the issue.

Those who do are connected to a scammer posing as an employee of the institution, who then carries the scam through until it succeeds or the victim catches on.

With the P1 (p1bot.io) platform, scammers don’t have to talk to potential victims during a live call: they move the scam along by playing messages pre-recorded with natural-sounding AI-generated voices.

“We’ve seen open-source OTP bot kits on GitHub that wire together ElevenLabs and Twilio, and academic researchers demonstrated a fully automated AI vishing system (ViKing) using the same stack in a controlled study,” says Mirage Security CEO Ross Lazerowitz.

“But p1bot represents something different: a commercial, subscription-based platform where ElevenLabs is embedded as a first-class feature, not bolted on by individual operators. The integration is polished, the voice catalog is curated, and the workflow is designed to lower the barrier for anyone willing to pay.”

How p1bot streamlines vishing attacks

Would-be scammers register to use p1bot via a Telegram bot, pay $399 per month through the OxaPay crypto payment gateway, and get access to the web-based dashboard.

At its core, p1bot is a browser-based softphone, Mirage Security found.

Via its interface, operators can spoof phone numbers, generate AI voice prompts, place calls via WebRTC, capture DTMF tones (i.e., the sounds produced when the victim presses keys on a phone), record calls, and play pre-generated audio clips mid-call to simulate automated interactive voice response (IVR) systems.
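For context on the DTMF capture mentioned above: each key on a phone keypad is signalled by two tones played at once, one from a low-frequency “row” group and one from a high-frequency “column” group. The minimal Python sketch below only maps keys to their standard frequency pairs and back. The names (`KEYPAD`, `dtmf_tones`, `dtmf_key`) are illustrative, and a real detector must first extract the two frequencies from call audio (commonly with the Goertzel algorithm), which is not shown here.

```python
# Standard DTMF keypad: each key is signalled by one low-group (row)
# tone plus one high-group (column) tone, sent simultaneously.
ROW_FREQS_HZ = (697, 770, 852, 941)
COL_FREQS_HZ = (1209, 1336, 1477, 1633)
KEYPAD = (
    ("1", "2", "3", "A"),
    ("4", "5", "6", "B"),
    ("7", "8", "9", "C"),
    ("*", "0", "#", "D"),
)


def dtmf_tones(key: str) -> tuple[int, int]:
    """Return the (low, high) frequency pair in Hz for a keypad key."""
    for r, row in enumerate(KEYPAD):
        if key in row:
            return ROW_FREQS_HZ[r], COL_FREQS_HZ[row.index(key)]
    raise ValueError(f"not a DTMF key: {key!r}")


def dtmf_key(low: int, high: int) -> str:
    """Map a detected (low, high) frequency pair back to its key.

    Raises ValueError if either frequency is not a DTMF frequency.
    """
    return KEYPAD[ROW_FREQS_HZ.index(low)][COL_FREQS_HZ.index(high)]
```

For example, `dtmf_tones("1")` returns `(697, 1209)`, the tone pair an IVR-style system listens for when it asks a caller to “press 1”.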

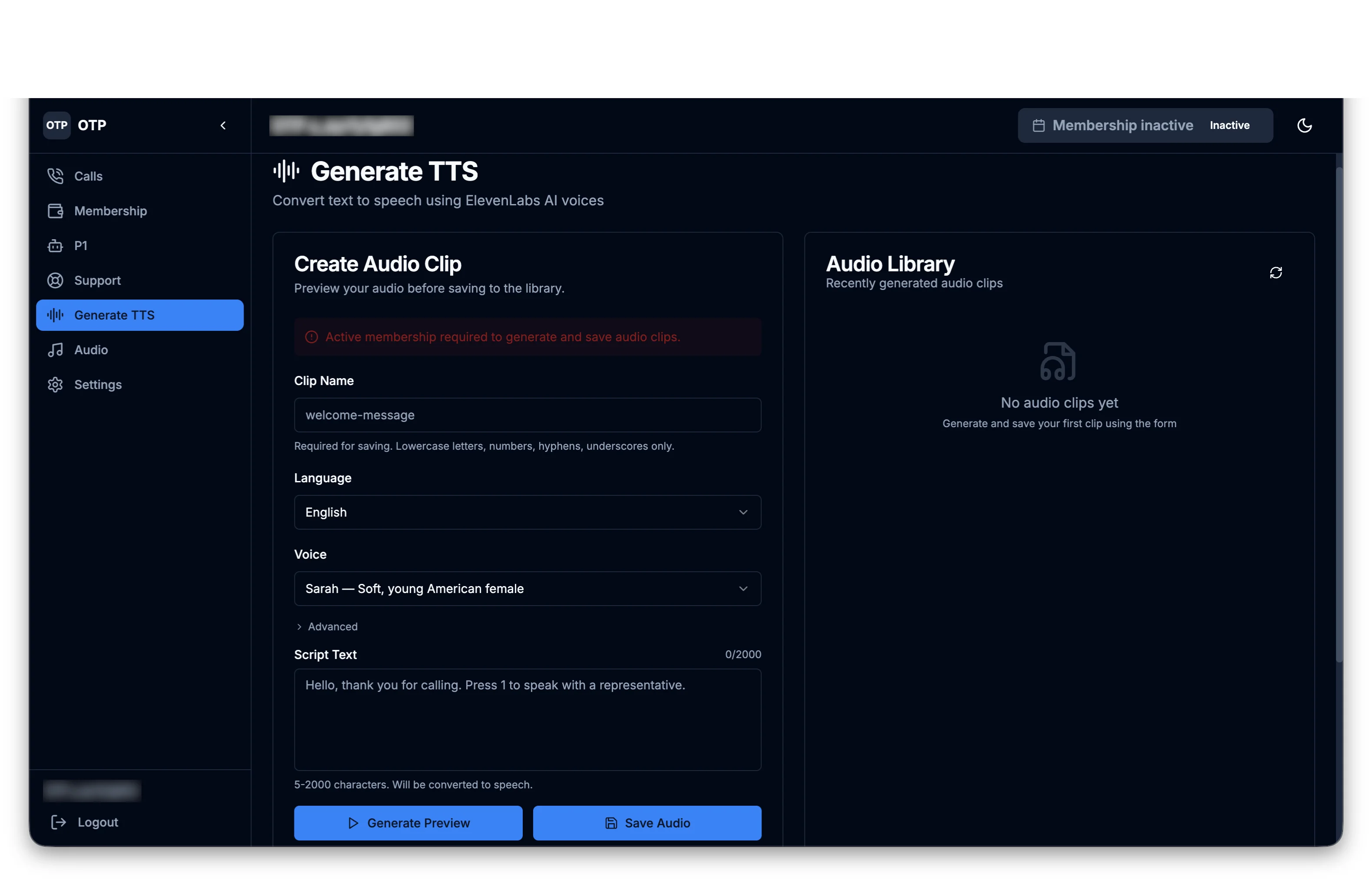

Operators create fake IVR clips via the “Generate TTS” page, and save them in the Audio Library so they can use them during live calls.

The Generate TTS page (Source: Mirage Security)

“[The platform] ships with a hardcoded catalog of ElevenLabs voice IDs: 15 English, 4 French, and 4 Spanish voices, each mapped to a real ElevenLabs voice profile,” Lazerowitz says.

The platform-provided API access is backed by p1bot’s own accounts, he told Help Net Security, but operators with their own ElevenLabs account can extend the platform’s voice capabilities (e.g., by pasting in custom voice IDs).

Exposed JavaScript helped researchers dissect p1bot

Lazerowitz told us that the researchers were able to discover all of this because the platform’s client-side JavaScript was neither obfuscated nor access-restricted, which allowed them to analyze the application logic, API integrations, and embedded credentials without creating accounts or placing any calls.

They also examined the diagnostic log messages left enabled in the app’s production build and found many indicators that the platform was built with the help of AI coding assistants.

The integration of ElevenLabs’ voices into the platform shows that criminals are not developing new AI capabilities but subscribing to the same commercial services as everyone else, Lazerowitz noted.

“When we reported our findings to ElevenLabs, their security team responded quickly, investigated the abusive accounts, and took action. That kind of responsiveness, combined with the traceability features ElevenLabs has built into their platform, demonstrates that disruption is possible when researchers and vendors work together,” he concluded.

ElevenLabs monitors user-generated content to detect harmful activity and accepts reports of concerning content. When abuse is detected, the company bans the accounts involved and, when required by law, reports them to government authorities.

Help Net Security reached out to ElevenLabs for comment on Mirage Security’s report, but the company declined to comment on this specific case.
