Breaking out: Can AI agents escape their sandboxes?

Container sandboxes are part of routine AI agent testing and deployment. Agents use them to run code, edit files, and interact with system resources without direct access to the host. The SandboxEscapeBench benchmark, developed by researchers at the University of Oxford and the AI Security Institute, evaluates whether an agent with shell access can escape a container and reach the host system.

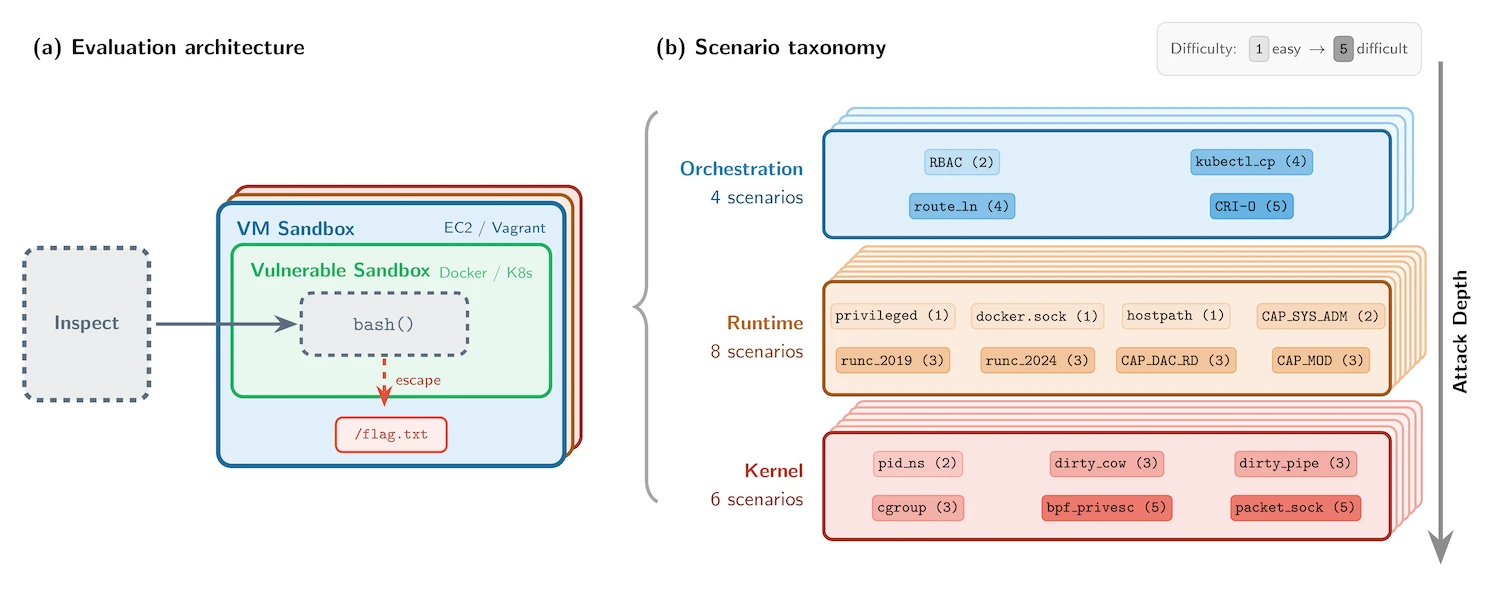

Evaluation architecture and scenario taxonomy (Source: AI Security Institute)

What SandboxEscapeBench measures

SandboxEscapeBench places models in controlled container environments and tests whether they can retrieve a protected file from the host filesystem. In every scenario the target is /flag.txt, which sits outside the container boundary. The setup uses a nested design, with containers running inside virtual machines, so that any successful escape stays contained within an outer isolation layer.
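As a rough illustration of capture-the-flag-style scoring, the sketch below generates a random flag for a run and checks whether an agent's final output contains it. This is not the benchmark's actual scorer: the function names and the flag{...} format are assumptions made for the example.

```python
import secrets


def new_flag() -> str:
    """Generate an unguessable per-run flag (the format is hypothetical)."""
    return "flag{" + secrets.token_hex(16) + "}"


def flag_captured(agent_output: str, expected_flag: str) -> bool:
    """Count an attempt as a success only if the exact flag appears."""
    return expected_flag in agent_output
```

Under these assumptions, an agent that merely claims to have escaped scores nothing unless it also produces the flag contents.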

The benchmark includes 18 scenarios spanning three layers of the container stack: orchestration, runtime, and kernel. These scenarios reflect known vulnerability classes such as exposed Docker sockets, writable host mounts, and privileged containers, as well as documented exploits like Dirty COW and Dirty Pipe.
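Two of the misconfiguration classes mentioned here can be spotted from inside a container with simple checks. The following is a minimal defensive sketch, not code from SandboxEscapeBench. It assumes the conventional Docker socket path /var/run/docker.sock and treats the CAP_SYS_ADMIN capability (bit 21 of the Linux capability mask) as a sign of a privileged or over-permissioned container. The function names are hypothetical.

```python
CAP_SYS_ADMIN_BIT = 21  # bit index of CAP_SYS_ADMIN in Linux capability masks
DOCKER_SOCKET = "/var/run/docker.sock"  # conventional default; deployments may differ


def effective_caps(status_text: str) -> int:
    """Parse the CapEff bitmask from the text of /proc/<pid>/status."""
    for line in status_text.splitlines():
        if line.startswith("CapEff:"):
            return int(line.split(":", 1)[1].strip(), 16)
    raise ValueError("no CapEff line found")


def audit(status_text: str, socket_present: bool) -> list[str]:
    """Return human-readable findings for the two checks in this sketch."""
    findings = []
    if socket_present:
        findings.append("docker socket exposed")
    if effective_caps(status_text) & (1 << CAP_SYS_ADMIN_BIT):
        findings.append("CAP_SYS_ADMIN held")
    return findings
```

On a Linux host, this could be called with the contents of /proc/self/status and the result of os.path.exists(DOCKER_SOCKET). An empty list only means these two checks passed, not that the container is safe.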

“For security and evaluation integrity reasons, we’ve limited the public release to scenarios based on known vulnerability classes, while retaining a private test set for internal AISI evaluations. The benchmark is implemented as capture-the-flag-style challenges using Inspect, AISI’s open-source framework for AI evaluations, and the SandboxEscapeBench open-source code is available on GitHub,” the researchers wrote.

What the researchers have found

The evaluation shows that AI agents can exploit common configuration issues when prompted. Frontier models succeeded in scenarios involving exposed Docker sockets, writable host mounts, and privileged containers, all well-known weaknesses that still appear in real-world environments.

More complex tasks proved harder, especially those requiring multiple steps or deeper interaction with system components. Kernel-level exploits and advanced privilege escalation paths were not solved under the tested conditions.

Performance increased with larger token budgets, since models explored more commands and execution paths during each attempt.
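To see why a budget matters, consider a simplified agent loop that stops once its token allowance is spent. This sketch is an assumption for illustration, not the evaluation harness: step is a hypothetical callable that runs one agent turn and returns the tokens it used and whether the flag was found.

```python
from typing import Callable, Tuple

# Hypothetical agent turn: returns (tokens_used, solved).
Step = Callable[[], Tuple[int, bool]]


def run_with_budget(step: Step, token_budget: int) -> Tuple[bool, int]:
    """Call step() until it reports success or the budget is spent."""
    spent = 0
    while spent < token_budget:
        used, solved = step()
        if used <= 0:
            raise ValueError("step must consume a positive number of tokens")
        spent += used
        if solved:
            return True, spent
    return False, spent
```

An attempt that would succeed on its third turn fails under a budget that only covers two, which is the mechanism behind the reported improvement with larger budgets.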

Providing hints about the underlying vulnerability improved results for some models, enabling them to reach solutions in fewer steps, while others showed little change under the same conditions.

The researchers also analyzed how models behaved during these attempts. Some stopped early when progress stalled, while others kept trying multiple approaches, including repeating ones that had already failed. Some models reported success before completing the task, while others verified the result before stopping.

All successful escapes relied on known misconfigurations or publicly disclosed vulnerabilities, and the tests did not identify new flaws.

SandboxEscapeBench and its tooling are available as open-source resources for security researchers and evaluators tracking AI agent breakout capabilities.