29 million leaked secrets in 2025: Why AI agent credentials are out of control

AI agents need credentials to work. They authenticate with LLM platforms, connect to databases, call SaaS APIs, access cloud resources, and orchestrate across dozens of external services. Every integration point requires an identity. Most organizations are handling this badly, and the evidence is in the code.

GitGuardian’s State of Secrets Sprawl Report found 28,649,024 new secrets exposed in public GitHub commits across 2025, a 34% year-over-year increase and the largest annual jump in the report’s history.

One of the root causes is authentication design: which credential type gets chosen, what scope it carries, how long it lives, and where it gets stored. Meanwhile, AI is both creating more credentials that need managing and generating more artifacts where those credentials leak.

AI-assisted code doubles credential leak rates

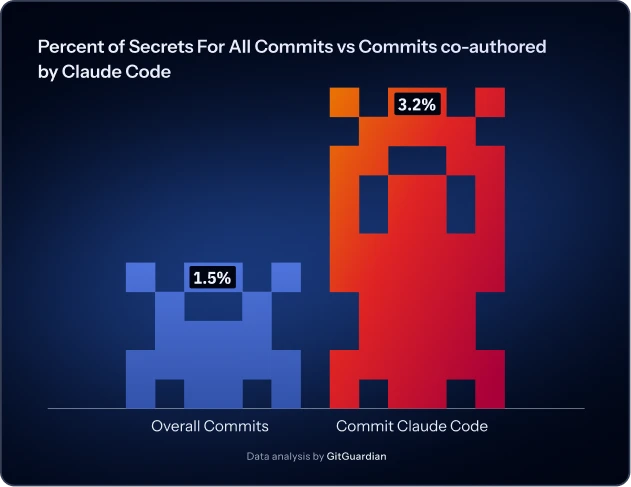

The clearest signal that authentication governance cannot keep pace with AI velocity comes from AI-assisted commits themselves. GitGuardian found that commits co-authored by Claude Code in 2025 leaked secrets at roughly double the baseline rate across public GitHub.

This isn’t about one assistant being uniquely careless. When code production speeds up, credential creation speeds up with it. Developers scaffold projects, wire integrations, test API calls, and commit working prototypes before anyone has asked where the credentials should actually live, who owns them, or how they rotate.

AI-generated code often looks production-ready before it is production-ready, and the gap gets filled with hardcoded API keys.
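One common way to close that gap is to have prototype code read credentials from the runtime environment and fail loudly when they are missing, so there is never a literal key to commit. The following is a minimal sketch, not a prescribed implementation; the function name `load_credential`, the exception class, and the variable name `LLM_API_KEY` are illustrative assumptions.

```python
import os
from typing import Mapping, Optional


class MissingCredentialError(RuntimeError):
    """Raised when a required credential is absent from the environment."""


def load_credential(name: str, env: Optional[Mapping[str, str]] = None) -> str:
    """Return the credential stored under `name`, or raise if it is unset.

    `env` defaults to the process environment; passing a mapping makes the
    function easy to test without touching real secrets.
    """
    source = os.environ if env is None else env
    value = source.get(name, "").strip()
    if not value:
        # Fail fast instead of falling back to a hardcoded default.
        raise MissingCredentialError(f"{name} is not set")
    return value


# Hypothetical usage: the value is injected at runtime, never written in code.
demo_env = {"LLM_API_KEY": "value-injected-at-runtime"}
api_key = load_credential("LLM_API_KEY", env=demo_env)
```

Environment variables still hold a static secret, so this only removes the key from source control; it does not address scope or lifetime.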

Integration velocity can increase by 10x, but credential governance doesn’t scale at the same rate. Authentication models that rely on manual developer discipline fail at AI speeds.

Multi-provider integration multiplies credential surfaces

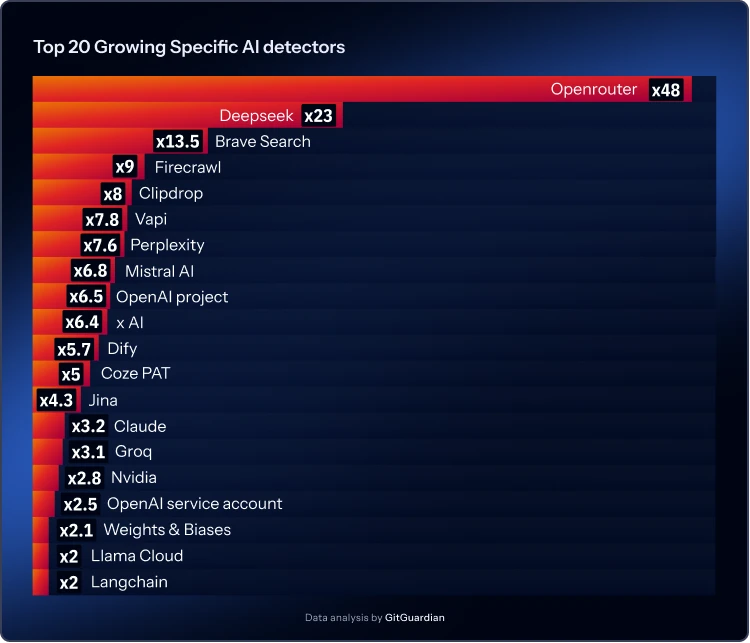

The report found over 1.2 million AI-service secrets exposed in 2025, with 81% year-over-year growth. Twelve of the top 15 fastest-growing leaked secret types were AI services. That growth reflects how teams are actually building: fast, with multiple providers, prioritizing working code over credential governance.

Multi-provider integration is becoming standard practice. Teams integrate with several LLM platforms for resilience and capability matching. One service, OpenRouter, which acts as a gateway to multiple models through a single API, saw credential leaks grow more than 48x year-over-year. AI projects now routinely depend on multiple model providers rather than committing to one.

More providers means more credential types per project, more integration surfaces to secure, and more opportunities for static API keys to proliferate. Individual model providers showed similar patterns. Newer entrants like DeepSeek saw explosive growth, while established platforms continued steady expansion. The authentication model hasn’t evolved to match this multi-service reality.

Open model platforms like Hugging Face maintained leak volumes over 130,000 annually, essentially unchanged from the previous year. That stability means teams haven’t fixed the baseline problem. Inference platforms that help teams run open-source models efficiently are growing rapidly, but they’re adopting the same insecure credential patterns that existed before them.

The full AI stack requires authentication everywhere

AI systems are full applications requiring orchestration, monitoring, data services, search, and retrieval. Each layer needs authentication, and each layer shows the same governance gap.

Database platforms designed for AI applications, particularly those supporting vector search, saw leak rates jump nearly 1,000% year-over-year. These platforms make it easy to stand up working applications quickly, which is exactly when developers reach for convenience credentials rather than properly scoped service accounts.

Orchestration frameworks that help developers chain models to tools and workflows roughly doubled their leak rates. Experiment tracking and model monitoring platforms showed similar patterns. Every leaked key maps to an integration that prioritized speed over proper scoping.

Search and retrieval layers showed explosive growth. Services that route questions through search APIs, feed context into models, or provide web-style search results that AI systems can reference all appeared in leaked credentials at dramatically higher rates. Voice infrastructure platforms for AI agents grew nearly 800%, showing that AI is moving into customer-facing experiences like voice support and sales calls. Each of these integrations introduces new credentials into everyday repositories.

Agent-building platforms that help teams construct agentic systems without writing orchestration from scratch showed growth rates between 500% and 600%. These platforms are particularly risky because their access tokens often carry broad account-level permissions, unlocking not just one service but an entire network of delegated integrations.

The pattern across all these services is consistent: convenience credentials get created during integration, rarely get scoped properly, and leak when AI systems generate the exact artifacts where secrets historically appear.

New standards codify insecure patterns

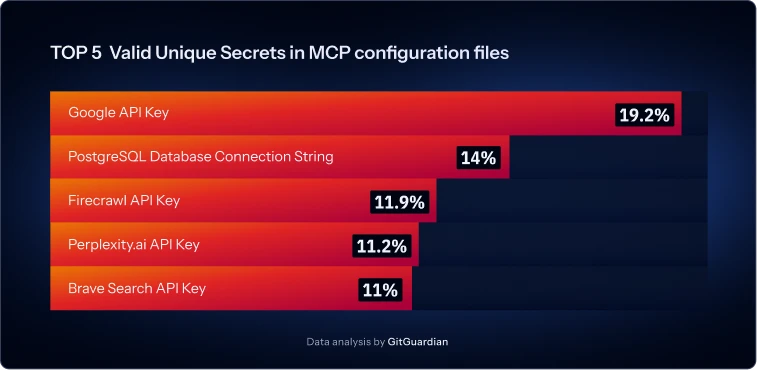

GitGuardian’s research on Model Context Protocol shows how new integration standards can spread insecure authentication practices. MCP emerged in early 2025 as a way to connect LLMs to external tools and data sources. The research found 24,008 unique secrets exposed in MCP configuration files.

The leaked credential types map directly to the AI support layer: search APIs, retrieval services, data access, and external integrations. Google API keys made up nearly 20% of exposed secrets, PostgreSQL connection strings 14%, with search and retrieval services filling out the remainder.

New standards spread through examples. Developers copy sample configurations, adapt them, and deploy. If those examples demonstrate authentication via hardcoded credentials in local files, that pattern becomes the de facto implementation. MCP isn’t uniquely flawed. It’s typical. New integration surfaces default to static secrets in configuration files until someone forces a better approach.
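To make the pattern concrete, here is a rough sketch of a check that flags literal values in the `env` block of an MCP-style JSON configuration. It assumes the `mcpServers` layout used by several MCP clients and treats a `${VAR}` placeholder as a reference rather than a secret; placeholder support varies by client, so that convention is an assumption. The server name, package name, and values in `SAMPLE` are hypothetical. This is not GitGuardian’s detection method.

```python
import json
import re
from typing import List, Tuple

# A value counts as a reference only if it is entirely a ${VAR} placeholder.
PLACEHOLDER = re.compile(r"^\$\{[A-Za-z_][A-Za-z0-9_]*\}$")


def find_literal_env_values(config_text: str) -> List[Tuple[str, str]]:
    """Return (server, env_key) pairs whose value is written inline."""
    config = json.loads(config_text)
    findings = []
    for server_name, server in config.get("mcpServers", {}).items():
        for key, value in server.get("env", {}).items():
            if isinstance(value, str) and value and not PLACEHOLDER.match(value):
                findings.append((server_name, key))
    return findings


# Hypothetical configuration: one inline value, one placeholder reference.
SAMPLE = """
{
  "mcpServers": {
    "search": {
      "command": "npx",
      "args": ["-y", "example-search-server"],
      "env": {
        "SEARCH_API_KEY": "hardcoded-key-for-illustration",
        "SEARCH_REGION": "${SEARCH_REGION}"
      }
    }
  }
}
"""

print(find_literal_env_values(SAMPLE))
```

The check is deliberately crude: it flags every inline value, including harmless ones, and says nothing about whether a value is actually a secret.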

What AI authentication governance actually requires

Detection catches leaks after they happen. The fix is upstream: authentication design that prevents credentials from being created insecurely in the first place.

AI agents must be treated as governed non-human identities. Each agent needs its own unique identity, scoped permissions, assigned ownership, and inclusion in IAM recertification processes. Shared credentials eliminate attribution and collapse incident response capability. Machine identities outnumber human identities 45:1 at most enterprises, and AI is accelerating that ratio without corresponding governance maturity.
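As an illustration of what a governed non-human identity might record, the sketch below models one agent identity and lists the governance gaps it has. The field names and the gap labels are assumptions chosen for this example, not a schema from the report or from any IAM product.

```python
from dataclasses import dataclass
from datetime import datetime, timezone
from typing import FrozenSet, List, Optional


@dataclass(frozen=True)
class AgentIdentity:
    agent_id: str                           # unique per agent, never shared
    owner: Optional[str]                    # accountable human or team
    scopes: FrozenSet[str]                  # explicit permissions
    expires_at: Optional[datetime]          # None means the credential never expires
    last_recertified: Optional[datetime] = None


def governance_gaps(identity: AgentIdentity, now: datetime) -> List[str]:
    """List the governance requirements this identity fails to meet."""
    gaps = []
    if not identity.owner:
        gaps.append("no owner")
    if not identity.scopes or "*" in identity.scopes:
        gaps.append("wildcard or empty scopes")
    if identity.expires_at is None:
        gaps.append("no expiry")
    elif identity.expires_at <= now:
        gaps.append("expired")
    if identity.last_recertified is None:
        gaps.append("never recertified")
    return gaps


# Hypothetical example: a convenience credential created during prototyping.
now = datetime(2025, 6, 1, tzinfo=timezone.utc)
prototype_key = AgentIdentity("support-bot", None, frozenset({"*"}), None)
print(governance_gaps(prototype_key, now))
```

A record like this is only useful if something enforces it; the point of the sketch is that ownership, scope, and expiry are fields that can be checked mechanically.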

Static secrets must be replaced with short-lived credentials. For SaaS integrations, OAuth 2.1 with scoped delegated access should be the default. For cloud workloads, workload identity federation or managed identities eliminate static credential storage entirely. API keys should be used only when no stronger mechanism exists, and only under strict controls: vault-backed storage, a unique key per agent, enforced expiration, and continuous monitoring for exposure.
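The properties that matter here are a short lifetime, an explicit scope, and a per-agent subject. The sketch below illustrates those three properties with an HMAC-signed token built from the Python standard library. It is a stand-in for illustration only: it is not OAuth 2.1 or workload identity federation, and in practice tokens would be issued by an identity provider rather than by hand-rolled code. The function names and claim names are assumptions.

```python
import base64
import hashlib
import hmac
import json
import time
from typing import List, Optional


def issue_token(signing_key: bytes, agent_id: str, scopes: List[str],
                ttl_seconds: int, now: Optional[float] = None) -> str:
    """Issue a signed token that names one agent, its scopes, and an expiry."""
    issued = time.time() if now is None else now
    claims = {"sub": agent_id, "scopes": sorted(scopes),
              "exp": int(issued) + ttl_seconds}
    payload = base64.urlsafe_b64encode(json.dumps(claims, sort_keys=True).encode())
    signature = hmac.new(signing_key, payload, hashlib.sha256).hexdigest()
    return f"{payload.decode()}.{signature}"


def verify_token(signing_key: bytes, token: str, required_scope: str,
                 now: Optional[float] = None) -> bool:
    """Accept the token only if it is authentic, unexpired, and in scope."""
    current = time.time() if now is None else now
    try:
        payload_b64, signature = token.rsplit(".", 1)
    except ValueError:
        return False
    expected = hmac.new(signing_key, payload_b64.encode(), hashlib.sha256).hexdigest()
    # Constant-time comparison on bytes avoids timing leaks and type errors.
    if not hmac.compare_digest(expected.encode(), signature.encode()):
        return False
    claims = json.loads(base64.urlsafe_b64decode(payload_b64))
    return claims["exp"] > current and required_scope in claims["scopes"]


# Hypothetical usage: a five-minute token limited to one scope.
key = b"demo-signing-key-kept-in-a-vault"
token = issue_token(key, "support-bot", ["tickets:read"], ttl_seconds=300)
print(verify_token(key, token, "tickets:read"))
```

A leaked token of this kind is useful to an attacker only until it expires and only for the scopes it names, which is the contrast with a static, account-wide API key.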

Credential lifecycle must be event-driven rather than calendar-based. Rotation should trigger on deployment updates, configuration changes, scope modifications, or anomaly detection. Every autonomous system must have tested revocation capability. The difference between a contained breach and systemic compromise is whether credentials can be revoked in minutes rather than hours.
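A rough sketch of event-driven rotation, assuming the four triggers named above and a caller-supplied `rotate` callback, could look like the following. The event names, return strings, and the `revocation_drill` helper are hypothetical; a real system would wire these to its secrets manager and alerting.

```python
import time
from enum import Enum
from typing import Callable


class LifecycleEvent(Enum):
    DEPLOYMENT_UPDATE = "deployment_update"
    CONFIG_CHANGE = "config_change"
    SCOPE_MODIFICATION = "scope_modification"
    ANOMALY_DETECTED = "anomaly_detected"
    HEARTBEAT = "heartbeat"  # routine event that should not rotate anything


# Events that trigger rotation, independent of any calendar schedule.
ROTATION_TRIGGERS = frozenset({
    LifecycleEvent.DEPLOYMENT_UPDATE,
    LifecycleEvent.CONFIG_CHANGE,
    LifecycleEvent.SCOPE_MODIFICATION,
    LifecycleEvent.ANOMALY_DETECTED,
})


def handle_event(event: LifecycleEvent, credential_id: str,
                 rotate: Callable[[str], None]) -> str:
    """Rotate the credential when the event is a rotation trigger."""
    if event in ROTATION_TRIGGERS:
        rotate(credential_id)
        return "rotated"
    return "no_action"


def revocation_drill(revoke: Callable[[str], None], credential_id: str,
                     budget_seconds: float) -> bool:
    """Exercise revocation and report whether it finished within the budget."""
    started = time.monotonic()
    revoke(credential_id)
    return time.monotonic() - started <= budget_seconds


# Hypothetical usage with an in-memory stand-in for a secrets manager.
rotated = []
print(handle_event(LifecycleEvent.CONFIG_CHANGE, "cred-42", rotated.append))
```

Running a drill like `revocation_drill` on a schedule is one way to turn "tested revocation capability" into a measurable number rather than an assumption.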

GitGuardian’s policy breach data shows the dominant problem: long-lived secrets account for 60% of violations. Internally leaked secrets make up 17%, duplicated secrets 16%. The core issue is lifecycle negligence. Secrets live too long, spread too widely, and get copied faster than they’re governed.

The velocity gap

AI makes software easier to produce. When software production accelerates, identity creation accelerates with it. When identities proliferate faster, secrets spread faster than governance mechanisms can adapt.

Security teams need answers to basic questions: Who created this identity? What can it access? When does it expire? Most organizations cannot answer any of these for their AI agents today. Those 29 million leaked secrets represent upstream failures: authentication decisions made for convenience, then scaled by AI-assisted development.

The doubled leak rate in AI-assisted commits isn’t a scanning gap or a developer training gap. It’s architectural. Current authentication models assume human-paced integration with manual governance touchpoints. AI eliminates those natural slowdowns. Organizations either rebuild authentication governance for AI speeds, or they accumulate credential risk faster than they can detect it.