Microsoft details AI prompt abuse techniques targeting AI assistants

Prompt abuse occurs when crafted inputs manipulate an AI system into producing unintended behavior, such as attempting to access sensitive information or overriding built-in safety instructions.

Prompt injection is also recognized as one of the top risks in the 2025 OWASP guidance for LLM applications.

“Detecting abuse is challenging because it exploits natural language, such as subtle differences in phrasing, which can manipulate AI behavior while leaving little or no obvious trace. Without proper logging and telemetry, attempts to access or summarize sensitive information can go unnoticed,” the company said.

Prompt abuse attack patterns

Prompt abuse includes inputs designed to push systems beyond their intended boundaries, with outcomes ranging from data exposure to altered outputs.

Direct prompt override pushes a system to ignore its rules, safety policies, or system prompts. These inputs are structured to bypass guardrails or surface restricted information.

Extractive prompt abuse targets sensitive inputs and seeks to expose information that should remain restricted, including the contents of protected files or datasets.

Indirect prompt injection embeds hidden instructions inside external content such as documents, webpages, emails, or chat messages. When processed as input, these instructions can alter summaries, introduce bias, or trigger unintended actions.

Microsoft describes a scenario where a finance analyst receives a link to what appears to be a trusted news site. Nothing looks unusual. The issue sits in the URL, where a fragment contains hidden instructions that are not visible to the user but are still included in the system’s prompt.

After the analyst requests a summary, the tool processes the link and incorporates that hidden text. The result can be misleading or incomplete, even though the user has not entered anything unsafe.

This type of prompt injection does not rely on code execution or direct system access. It changes how information is interpreted. The output can still look reliable, which makes the issue harder to spot and allows it to influence decisions and workflows.

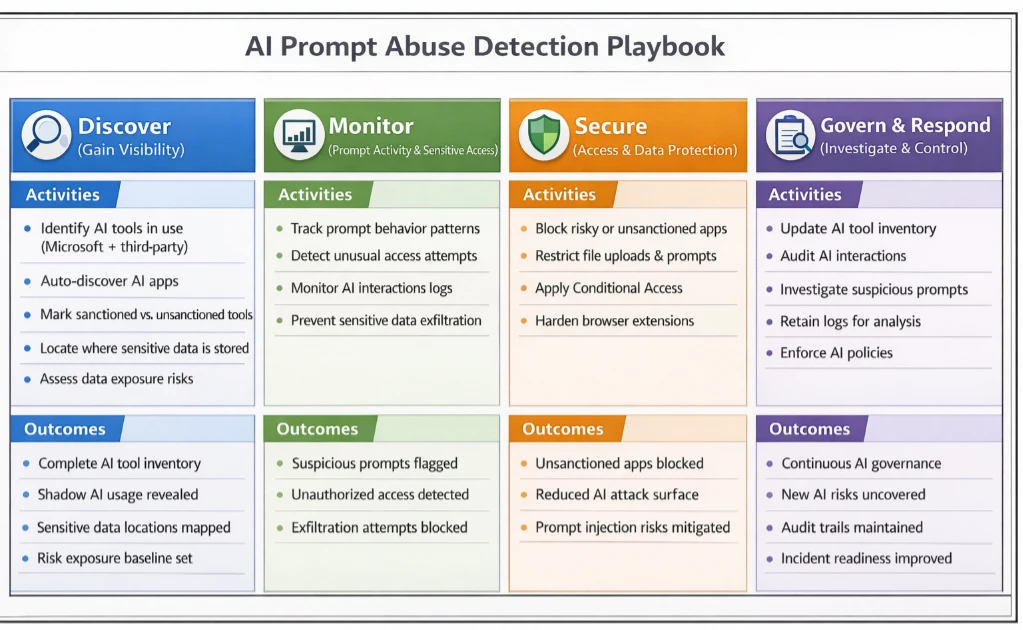

Prompt abuse detection playbook

To address these risks, Microsoft introduces a detection and response playbook that maps how prompt abuse can unfold across a typical workflow.

Source: Microsoft Incident Response AI Playbook

Using these security tools, organizations can turn logged interactions into actionable insights that reveal suspicious activity, provide context, and support measures to protect sensitive data.

“Combining monitoring, governance, and user education helps organizations maintain reliable AI outputs while identifying manipulation attempts early,” the company wrote.