Who owns AI agent access? At most companies, nobody knows

AI agents are operating across production enterprise environments at scale, and the identity infrastructure managing their access has not kept up with their deployment.

A January 2026 survey of 228 IT and security professionals, conducted by the Cloud Security Alliance, finds that the majority of organizations have AI agents active in core systems, with fragmented ownership of how those agents authenticate and what they can access.

Agents are embedded in production systems

Task-automation agents are in use at 67% of surveyed organizations. Data retrieval and research agents are deployed at 52%, and code-generation agents and security or monitoring agents are each reported at 50%. Infrastructure and IT operations agents are running at 41% of organizations. Only 15% of respondents say their organizations do not use AI agents in production environments.

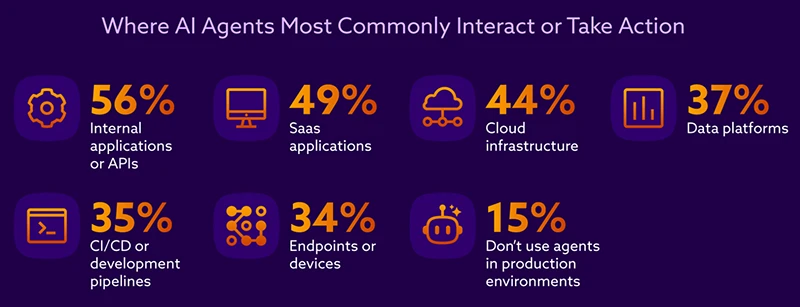

Agents interact most commonly with internal applications or APIs (56%), followed by SaaS applications (49%) and cloud infrastructure (44%). More than a third report agent activity within CI/CD or development pipelines (35%) and data platforms (37%).

Seventy-three percent of respondents expect AI agents to become very important or critical to their organizations within the next 12 months.

Identity assignment is fragmented

When an AI agent performs an action, 52% of organizations represent that agent using an application or workload identity. Forty-three percent use a shared or generic service account, 36% assign the agent its own dedicated identity, and 31% allow agents to operate under a human user’s identity. Twelve percent are unsure how the identity is represented. Multiple approaches frequently coexist within the same organization.

Most organizations cannot clearly distinguish between actions performed by AI agents and those performed by humans. Even within the same organization, different teams describe AI agents in inconsistent ways.

No single team owns the problem

Responsibility for determining how AI agents authenticate and access systems is split across security, development, engineering, and IT teams, with no single function holding clear ownership. Identity and IAM teams are rarely designated as the primary owner, and a portion of organizations report no identifiable owner at all.

When an AI agent takes an unintended or undesired action, 28% of organizations assign responsibility to security or IT, 25% to development or engineering, and 18% to business or product owners. Fifteen percent are unsure who is responsible.

Access controls show measurable gaps

Fifty-seven percent of respondents report moderate or high confidence that AI agents in their organizations have appropriately scoped access to systems and data. The underlying practices tell a more complicated story.

A significant share of respondents are unsure how frequently credentials used by AI agents are rotated or refreshed, and a smaller portion report that rotation rarely or never occurs. Access control frameworks are applied very consistently to AI agents at only a minority of organizations, and a notable share report either no consistency or uncertainty about their own practices.

Setting up authentication and credential handling for a typical AI agent requires between one and ten days of engineering effort at 43% of organizations. Thirty-two percent are unsure of the time required.

Agents inherit permissions rather than holding their own

At most organizations, AI agent access is determined by predefined automation logic or by the permissions of the human requesting the action. A minority of organizations base access on the agent’s own permissions.

The majority of respondents say AI agents inherit access originally intended for a human or another system at least sometimes. Most agree that agents often receive more access than necessary to complete their tasks, and a similarly large share agree that agents introduce access pathways that are difficult to monitor.

Eighty-one percent agree that prompt manipulation could cause an AI agent to reveal sensitive credentials or tokens.

Among open-ended responses, over-privileged access or excessive permissions is the most frequently cited concern at 24%, followed by lack of visibility into AI agent behavior at 19%.

Governance fills gaps left by identity controls

Organizations currently rely on policy-based restrictions and human approval or review steps to control high-impact or sensitive AI agent actions. Thirty-nine percent report relying on logging and post-action monitoring only, and 32% report runtime checks or validations.

For revoking or limiting an agent’s access, the most common mechanisms are disabling the agent’s identity and revoking active session tokens. A large share report terminating the compute environment the agent runs on. Fewer organizations can remove or modify access policies in real time, and a small portion report no revocation mechanisms at all.

Practitioners point to visibility and identity separation as priorities

When asked which capabilities would most improve their organizations’ ability to safely scale AI agents, 52% selected real-time visibility into agent actions. Forty-five percent selected clear identity separation between AI agents and humans. The ability to grant per-task, short-lived access was selected by 32%, and standardized authentication methods by 30%.
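To make two of those capabilities concrete, here is a minimal sketch of what per-task, short-lived access under a dedicated agent identity could look like. It is an illustration only and is not drawn from the survey: the names `TaskGrant`, `issue_grant`, and `is_allowed`, the scope strings, and the five-minute default lifetime are all hypothetical, and a real deployment would rely on its identity provider rather than an in-process object.

```python
import secrets
from dataclasses import dataclass


@dataclass(frozen=True)
class TaskGrant:
    """Hypothetical short-lived grant issued to an agent for one task."""
    token: str
    agent_id: str        # the agent's own identity, separate from any human's
    on_behalf_of: str    # the human requester, recorded for audit only
    scopes: frozenset    # the only actions this task may perform
    expires_at: float    # absolute expiry time, in seconds


def issue_grant(agent_id, on_behalf_of, scopes, now, ttl_seconds=300):
    """Create a grant limited to the given scopes that expires after ttl_seconds."""
    return TaskGrant(
        token=secrets.token_urlsafe(32),
        agent_id=agent_id,
        on_behalf_of=on_behalf_of,
        scopes=frozenset(scopes),
        expires_at=now + ttl_seconds,
    )


def is_allowed(grant, scope, now):
    """Allow an action only if the grant is unexpired and includes the scope."""
    return now < grant.expires_at and scope in grant.scopes
```

In this sketch the agent never borrows the human's permissions. A caller would issue a grant such as `issue_grant("agent:report-builder", "user:alice", {"crm:read"}, now=time.time())` and check `is_allowed(grant, "crm:read", now=time.time())` before each action, so access lapses on its own once the task window closes.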

The majority of respondents expect preventing over-privileged agents to become a more significant challenge as agentic AI expands. Large shares also expect distinguishing between human and agent activity to become harder. Secrets, credentials, and access patterns across multiple environments each rank as growing management concerns.
