Cybersecurity professionals are burning out on extra hours every week

Cybersecurity professionals in the U.S. are working an average of 10.8 extra hours per week beyond their contracted schedules, according to survey data collected from 300 cybersecurity and IT leaders by Sapio Research. That figure effectively adds a sixth working day to the standard week for a large portion of the field. Nearly half of respondents reported working 11 or more overtime hours weekly, and one in five logged more than 16 additional hours.

The psychological strain is measurable. Nearly half of respondents said their job feels emotionally exhausting more often than it feels rewarding, a sentiment most pronounced among C-level executives. A sizable share said they cannot take time off without returning to a significant backlog of stress, and roughly a third reported weekly anticipatory anxiety about the upcoming work week.

Despite this sustained pressure, 94% of respondents said they would choose cybersecurity again as a career, and the majority said they would do so without hesitation.

AI-driven pressure for non-technical skill mastery (Source: Seemplicity)

The skills profile is shifting

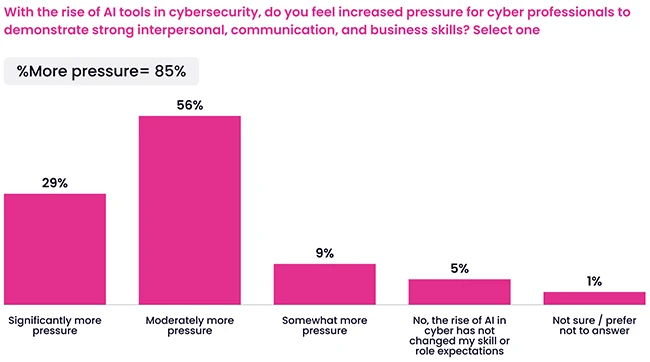

More than eight in ten cybersecurity leaders said that people skills, including communication, influence, and stakeholder management, are more central to their effectiveness now than they were five years ago. The adoption of AI tools is accelerating that shift, with a large majority of leaders reporting increased pressure to strengthen interpersonal, communication, and business skills as a direct result.

The perceived importance of people skills varies by organization size, with leaders at smaller enterprises identifying this shift at a higher rate than their counterparts at large enterprises. Most respondents said their role now requires significant cross-functional collaboration and alignment with broader business strategy.

Governance outranks engineering

When leaders were asked which capabilities will define the cybersecurity professional of the future, AI oversight and governance ranked first at 73%. Technological and engineering proficiency came in second, followed by cross-functional communication and business strategy and leadership, each cited by roughly half of respondents.

The data points to a profession shifting away from manual technical execution. Practitioners are increasingly expected to manage automated systems, audit AI outputs, and connect security decisions to organizational objectives.

Many organizations are adding AI governance responsibilities to security leaders without adjusting the underlying job structure. “Layering on AI oversight responsibilities without redesigning how teams are organized just accelerates burnout,” Ravid Circus, CPO at Seemplicity, told Help Net Security. “The org chart itself needs to be reworked.”

Circus said dedicated AI governance functions will need to be embedded within security teams as operational roles with defined accountability. That includes formal ownership of AI outputs, escalation paths when automation produces a bad result, and decision frameworks that specify when human intervention is required. “Organizations need to go a step beyond deploying AI tools and treat AI adoption as a leadership transformation,” Circus said. “Who owns this output? Who gets the call at 2 AM when the automated system makes a bad call? Until those questions have answers baked into the org structure, organizations are just redistributing risk.”

Budget exists, training does not

Nearly two-thirds of respondents said their organizations have sufficient budget to implement AI features, with smaller organizations showing the strongest confidence. More than half, across all organization sizes, described the training available for human-AI collaboration as either limited or insufficient.

“The budget isn’t the problem,” Circus said. “Organizations are buying the tools and skipping the next step: practical, role-specific enablement.” That means training that answers the questions security leaders encounter in daily operations: how to validate what an AI system is reporting, when to override it, and how to explain an AI-driven decision to a board or regulator.

Circus also pointed to the absence of structured frameworks for human-in-the-loop workflows. Most teams are improvising accountability in real time, with no defined model for when AI acts autonomously, when it recommends and a human decides, and when humans lead entirely. “That line is blurry, and that ambiguity is exactly what drives the decision fatigue and operational friction we’re seeing in the data,” Circus said.
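For illustration, the three modes described above can be written down as an explicit policy. The Python sketch below is an assumption-laden example, not a framework from Seemplicity or the survey: the `Finding` fields, the 1-to-5 severity scale, and the 0.9 confidence threshold are all hypothetical values chosen only to show what a defined model might look like.

```python
from dataclasses import dataclass
from enum import Enum


class Mode(Enum):
    AI_ACTS = "ai_acts_autonomously"
    AI_RECOMMENDS = "ai_recommends_human_decides"
    HUMAN_LEADS = "human_leads"


@dataclass(frozen=True)
class Finding:
    # All fields are hypothetical, chosen for illustration only.
    severity: int         # 1 (low) to 5 (critical)
    ai_confidence: float  # model confidence in [0.0, 1.0]
    reversible: bool      # can the proposed action be undone cheaply?


def decide_mode(finding: Finding) -> Mode:
    """Map a finding to one of the three human-in-the-loop modes."""
    # High-severity or irreversible actions: humans lead entirely.
    if finding.severity >= 4 or not finding.reversible:
        return Mode.HUMAN_LEADS
    # Low-severity, high-confidence, reversible actions: AI may act alone.
    if finding.severity <= 2 and finding.ai_confidence >= 0.9:
        return Mode.AI_ACTS
    # Everything in between: AI recommends, a human decides.
    return Mode.AI_RECOMMENDS


if __name__ == "__main__":
    print(decide_mode(Finding(severity=1, ai_confidence=0.95, reversible=True)).value)
    print(decide_mode(Finding(severity=3, ai_confidence=0.95, reversible=True)).value)
    print(decide_mode(Finding(severity=5, ai_confidence=0.99, reversible=True)).value)
```

The specific thresholds matter less than the fact that they are written down: once the boundaries are explicit, a team can debate and audit them instead of re-deciding them case by case.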

The gap between capital investment and workforce enablement means organizations are deploying AI tools without giving practitioners the preparation needed to oversee them. Leaders are absorbing the governance burden through manual effort rather than formal training structures.

Trust requires transparency and control

Cybersecurity leaders cite consistent, measurable accuracy over time as their primary factor for trusting AI systems, with clear accountability, human override controls, and transparent explanations of how decisions are made ranking nearly as high.

Leaders show notably higher trust in their own internal teams than in third-party vendors, a gap the data ties to visibility and oversight. Eighty-seven percent said they mostly or completely trust internal teams to use AI responsibly, compared with 77% who said the same of cybersecurity vendors.

Circus said vendors need to close that gap by building explainability into their products directly, including audit trails, meaningful human override controls, and honest communication about where a model may fail. “When security leaders feel responsible for outcomes from a black box they can’t see inside, it kills trust and adds governance complexity,” Circus said. The standard he pointed to: every AI-driven output should come with a traceable answer to who owns it if it is wrong, and how an error gets caught before it matters. “When vendors can answer that, trust follows,” Circus concluded.
