Reducing MTTR when a third-party cloud service is attacked

We all know you can’t stop every malicious attack. Even more troublesome is when a third-party cloud component that forms part of your infrastructure is hit and the attack affects customers using your digital service.

That’s what happened on October 22, when a DDoS attack on the AWS Route 53 DNS service left the S3 storage service unavailable or slow to load for thousands of organizations. We had an early view of this outage, which allowed us to reverse engineer the incident. The analysis offered clues on how to shorten mean time to resolve (MTTR) and minimize the impact of cloud-based service outages on your business.

Why some S3 customers were in the dark

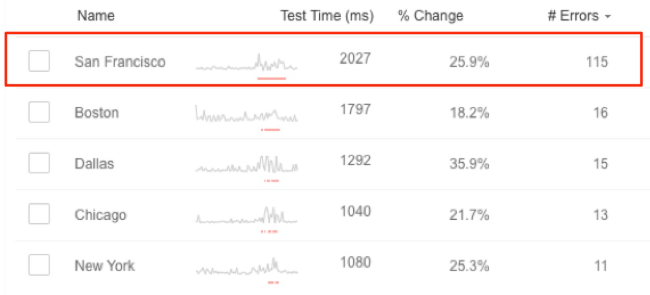

Amazon reported that the DNS outage started at 10:30am PT. However, anomalies had already appeared at 5:20am PT on the West Coast of the US, particularly in the San Francisco and Los Angeles areas. They showed up as packet loss when a DNS query hit the AWS network. A deeper look indicated that this affected only the S3 service, not AWS as a whole.

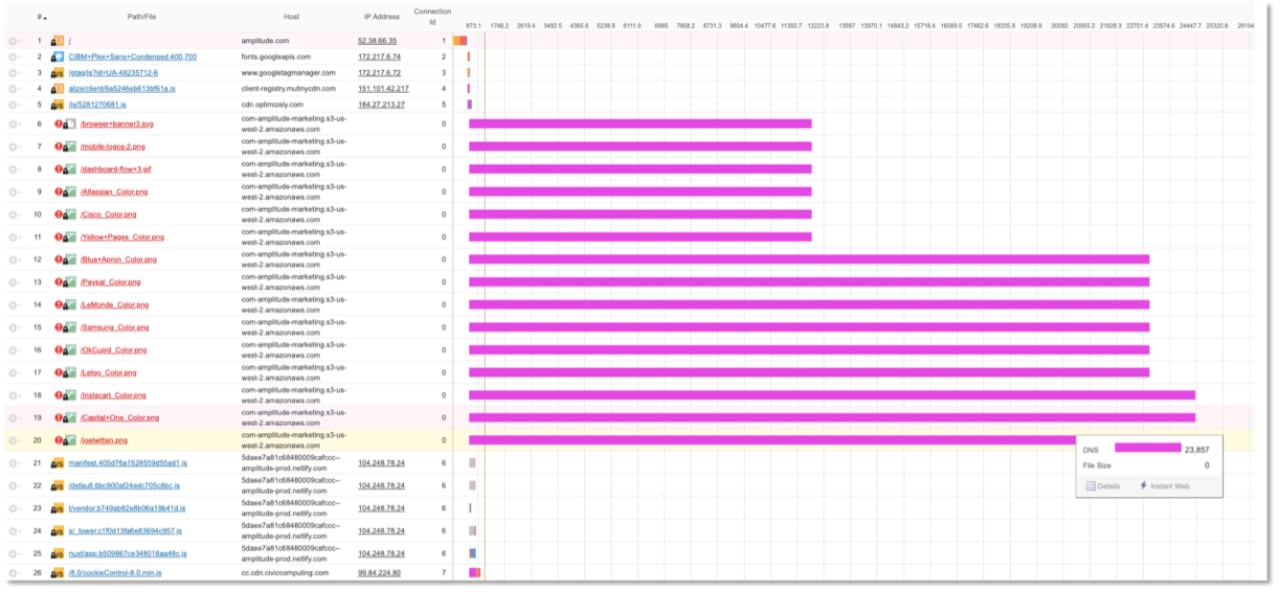

The waterfall chart shows how this affected one company: the magenta lines indicate that the Amazon Route 53 DNS servers responsible for resolving the S3 hostnames used by this website were not available. The company’s main website pages were up, but its data services dependent on S3 were down.

Eventually AWS acknowledged the DDoS attack and responded, with traceroutes indicating that packets were being rerouted through a Neustar ASN. Neustar is a DDoS mitigation service used by AWS. But this came hours after the damage to S3 customers was done.
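The regional pattern described above, where DNS lookups failed from some locations while others looked healthy, is the kind of signal a simple per-location comparison can surface. The following is a minimal sketch, not the tooling used in this analysis: the `ProbeResult` schema, the 20% threshold, the hostname, and the sample values are all assumptions made up for illustration.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class ProbeResult:
    """One DNS lookup attempt from one monitoring location (hypothetical schema)."""
    location: str   # where the probe ran, e.g. "San Francisco"
    hostname: str   # the third-party dependency being resolved
    resolved: bool  # False if the query timed out or returned no answer


def failure_rate_by_location(results):
    """Return {location: fraction of failed lookups} for a batch of probes."""
    totals = defaultdict(int)
    failures = defaultdict(int)
    for r in results:
        totals[r.location] += 1
        if not r.resolved:
            failures[r.location] += 1
    return {loc: failures[loc] / totals[loc] for loc in totals}


def locations_over_threshold(results, threshold=0.2):
    """List locations whose failure rate exceeds an assumed alert threshold."""
    rates = failure_rate_by_location(results)
    return sorted(loc for loc, rate in rates.items() if rate > threshold)


# Made-up sample for illustration only; not data from the incident.
sample = [
    ProbeResult("San Francisco", "bucket.example.com", False),
    ProbeResult("San Francisco", "bucket.example.com", False),
    ProbeResult("San Francisco", "bucket.example.com", True),
    ProbeResult("New York", "bucket.example.com", True),
    ProbeResult("New York", "bucket.example.com", True),
]
print(locations_over_threshold(sample))  # ['San Francisco']
```

Grouping by location rather than averaging across all probes matters here: a global average would dilute a regional failure that only some users experience.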

Five guidelines to fortify against third-party outages

It is possible to mitigate the impact of attacks on third-party components upon which you depend. The key is to know what to look for. Companies that caught this incident early had the following guidelines in place:

1. Always monitor from the end-user perspective. The traditional method is to monitor only your own infrastructure, which will often put you at a timing disadvantage. The first signs of this particular attack showed up as latency for West Coast users. Security or IT ops teams that saw this were put on early alert that something was wrong. Watching both your infrastructure and the end-user experience provides a holistic view and helps you detect problems more quickly.

2. Monitor third-party components as carefully as your own infrastructure. You brought on cloud-based services to shift the responsibility elsewhere, right? Well, only to a point. The number of domino-effect outages we’ve seen arising from external services such as storage, APIs, newsfeeds and video feeds has grown over the last few years. While your customers may understand when you report that it’s a third-party problem, they will still be frustrated with your service.

3. Don’t monitor a cloud-based service only from the cloud. If you had engaged a popular synthetic monitoring provider to watch your AWS Route 53-dependent services, there’s a good chance that provider’s software was hosted in the same AWS datacenter. So rather than getting a real-world view of performance, you’re getting a view from just down the hall. That would have resulted in a dashboard showing near-zero latency and 100% availability for DNS while your customers were suffering.

That’s why we recommend that your monitoring agents be placed in geographically distributed locations using a variety of infrastructure types, including backbone, ISP and wireless, in addition to multiple cloud providers. This increases your chances of detecting problems and also provides valuable ongoing analysis of the type of performance issues that impact your customers, and where they might be likely to occur next.

4. Decouple infrastructure where possible. Reducing dependencies between network layers and within layers can go a long way toward preventing domino-effect failures across your entire network. This is especially true for mission-critical components. The rebuilding of applications to accomplish this is far from a simple task, but will result in a more protected system overall. You’ll also find that loosely coupled infrastructure is easier to scale and more affordable since you’re increasing server capacity for select components rather than for an entire network layer.

DNS monitoring in a decoupled architecture can also help you isolate and analyze the health of individual components of your DNS system as well as locate where and why network latency and performance degradations are occurring. During the AWS attack, this type of testing helped us quickly determine the outage was contained to S3.

5. Have DNS backups in place and be ready to scale if necessary. An obvious approach that bears repeating is to have backups or workarounds in place, particularly for a service as vital as DNS. While this DNS problem only impacted S3, not the outsourced AWS DNS service many organizations use, it revealed a potential weak spot.

When the DDoS attack hit AWS, it was flooded with malicious traffic, causing the customer access problems we’ve outlined. If this AWS issue had also affected Route 53 customers, your site or service would have been unavailable. So have a plan to scale temporarily when hit with excessive traffic until you can put the backup in place.
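The failover idea behind guideline 5 can be sketched in a few lines. This is an application-level illustration under stated assumptions, not a substitute for a secondary DNS provider: `resolve_with_fallback`, the stub lookups, the hostname `files.example.com`, and the address `192.0.2.10` (a documentation-only address) are all hypothetical. Only `socket.getaddrinfo` is a real standard-library call, and it uses whatever resolver the operating system is configured with.

```python
import socket


def system_lookup(hostname):
    """Resolve via the operating system's configured resolver."""
    infos = socket.getaddrinfo(hostname, None, proto=socket.IPPROTO_TCP)
    return sorted({info[4][0] for info in infos})


def resolve_with_fallback(hostname, lookups):
    """Try each (name, lookup) pair in order and return the first usable answer.

    `lookups` is an ordered list of (label, callable) pairs; each callable
    takes a hostname and returns a list of address strings.
    """
    errors = []
    for name, lookup in lookups:
        try:
            addresses = lookup(hostname)
        except OSError as exc:  # socket.gaierror and timeouts are OSError subclasses
            errors.append(f"{name}: {exc}")
            continue
        if addresses:
            return name, addresses
        errors.append(f"{name}: empty answer")
    raise LookupError(f"all DNS paths failed for {hostname}: " + "; ".join(errors))


# Stubs that simulate a primary outage and a working backup (assumptions).
def failing_primary(hostname):
    raise socket.gaierror("simulated outage")


def static_backup(hostname):
    return {"files.example.com": ["192.0.2.10"]}.get(hostname, [])


result = resolve_with_fallback(
    "files.example.com",
    [("primary", failing_primary), ("backup", static_backup)],
)
print(result)  # ('backup', ['192.0.2.10'])
```

In a real deployment, `system_lookup` could be the first entry in `lookups`, with the backup path pointing at whatever secondary source you maintain. Returning the label of the path that answered also gives your monitoring a direct signal that the primary is failing.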

Conclusion

No cybersecurity plan is bulletproof, but careful monitoring can be a second line of defense, especially if you fine-tune your program to catch performance failures rooted in external third parties. With proper planning you can detect attack-related problems earlier and, in many instances, get ahead of them.