Click, wait, repeat: Digital trust erodes one login at a time

Sign-up forms that drag on, login steps that repeat, and access requests that take longer than expected have become a normal part of using digital services. These moments rarely stand out on their own, but over time they shape how people judge the systems they rely on. The 2026 Thales Digital Trust Index reflects that environment, where trust is built or lost through everyday interactions.

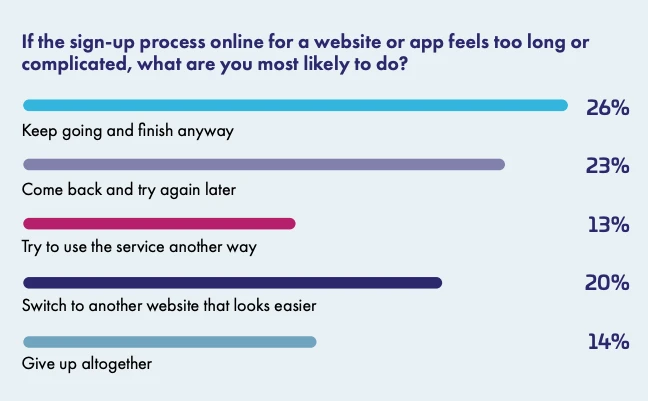

Most consumers have encountered problems when using websites or apps, with 68% reporting issues over the past year. That experience carries into onboarding, where patience starts to wear thin. Some users continue through a complicated sign-up process, while others pause or look for an easier alternative.

This does not suggest that people want less security. Many accept additional steps when those steps feel necessary. Friction becomes a problem when it feels excessive or unexplained, turning routine access into a point of hesitation.

Small disruptions shape how users feel about systems

The issues people encounter tend to be familiar. Pages load slowly, services stall, navigation becomes confusing, and small interruptions break the flow. Pop-ups appear at the wrong moment, CAPTCHA challenges interrupt progress, and forms request the same information more than once.

Over time, these interruptions influence how a service feels. When pages load quickly and remain stable, users report higher levels of trust. The same applies when navigation is simple and language is easy to follow. Words on the screen play a role in how people understand what is happening and whether they feel confident continuing.

Data sharing follows a similar pattern. People remain cautious, especially when it comes to more sensitive information. Basic details feel easier to provide, while identifiers raise more concern. When the reason for requesting information is explained, users are more willing to continue with the process.

At the same time, many people describe limited visibility into how their data is handled. Only a small portion say they understand how organizations collect and use their information. Others point to difficulty in finding or adjusting privacy settings, which contributes to a sense that responsibility sits with the user.

Familiar security signals feel reassuring, newer ones less so

Recognizable security measures continue to influence how services are perceived. Multi-factor authentication (MFA) and passkeys are widely understood, and their presence signals that protection is in place. Policies around data use have a similar effect when they are easy to find and understand.

AI produces a more uneven response. Some people see it as useful, particularly for routine tasks, while others remain cautious about how it operates behind the scenes. Concern is still widespread, and that uncertainty shapes how AI is received when it appears in user-facing interactions.

“The 2026 Digital Trust Index shows that as AI adoption is accelerating, trust is struggling to keep pace,” said Danny de Vreeze, VP of Identity and Access Management at Thales. “When AI simply helps people work faster, confidence is high. But when AI starts acting autonomously and making decisions or interacting with systems on a user’s behalf, people begin asking harder questions about security, control, and accountability.”

Partner access issues reveal deeper operational strain

The same themes become more pronounced in partner environments, where access problems directly interrupt work. Many partner users describe delays, inconsistent permissions, and systems that do not align with their needs.

Getting access is often the first hurdle. In some cases it arrives quickly, while in others it takes several days, leaving work waiting for systems to catch up. Even after access is granted, permissions do not always match the role, which creates confusion and slows progress.

Access can disappear while it is still needed, or remain in place after it should have been removed. Each situation creates its own kind of friction: the first slows productivity, and the second raises concerns about exposure.

In response, users find ways to keep moving. Workarounds become part of the process, including shared credentials and repeated requests for access changes. These patterns reflect gaps between how systems are structured and how work is carried out.

There is strong demand for more visibility and control. Users want to see what access they have, adjust it when needed, and avoid unnecessary steps. When those elements are missing, even well-intentioned controls can feel like obstacles.

Perception and experience continue to diverge

A gap remains between how organizations assess trust and how users experience it. IT and security leaders view trust as established, while users describe friction and uncertainty in everyday interactions. The same difference appears around data collection, where organizations treat it as routine and users approach it with caution.

At the same time, identity-related threats remain part of the environment, which puts pressure on organizations to strengthen controls while maintaining usability. Newer authentication methods continue to gain attention, and AI is moving quickly into production environments. Trust in these systems, especially when they handle personal data, is still developing.

Across both consumer and partner systems, access has taken on a broader role. It is where usability, security, and transparency come together in real time. When those elements align, interactions move forward without interruption. When they do not, even simple tasks can become points of friction that influence how trust develops over time.