Global analysis of 10 million web attacks

Web applications experience an average of 27 attacks per hour, or roughly one attack every two minutes, according to Imperva. The company observed and categorized attacks across 30 applications as well as onion router (TOR) traffic, monitoring more than 10 million individual attacks targeted at web applications over a period of six months.

The analysis shows:

- When websites came under automated attack, they received up to 25,000 attacks in one hour, or roughly 7 attacks every second.

- Four dominant attack types comprise the vast majority of attacks targeting web applications: Directory Traversal, Cross-Site Scripting, SQL injection, and Remote File Inclusion.
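The two rate figures quoted above (27 attacks per hour on average, 25,000 per hour at peak) can be checked with simple unit conversion. The snippet below is only an arithmetic sketch; the function names are illustrative and do not come from the report.

```python
def per_hour_to_interval_minutes(attacks_per_hour: float) -> float:
    """Average gap between two attacks, in minutes."""
    return 60.0 / attacks_per_hour


def per_hour_to_per_second(attacks_per_hour: float) -> float:
    """Convert an hourly attack count into attacks per second."""
    return attacks_per_hour / 3600.0


# Figures taken from the article: 27/hour on average, 25,000/hour at peak.
average_gap = per_hour_to_interval_minutes(27)
peak_rate = per_hour_to_per_second(25_000)

print(f"{average_gap:.1f} min between attacks, {peak_rate:.1f} attacks/s at peak")
# prints "2.2 min between attacks, 6.9 attacks/s at peak"
```

Both results agree with the rounded values in the text: about one attack every two minutes on average, and about seven per second during an automated attack.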

Modern botnets scan and probe the Web seeking to exploit vulnerabilities and extract valuable data, conduct brute force password attacks, disseminate spam, distribute malware, and manipulate search engine results.

These botnets operate with the same comprehensiveness and efficiency used by Google spiders to index websites. As the recent LulzSec episode highlighted, hackers can be effective in small groups.

Automation also means that attacks are equal-opportunity offenders: they do not discriminate between well-known and unknown sites, or between enterprise-level and non-profit organizations.

More than 61 percent of the attacks monitored originated from bots located in the United States, although no conclusions can be drawn about where these bots are controlled from.

Attacks from China made up almost 10 percent of all attack traffic, followed by attacks originating in Sweden and France.

According to the data, the amount of XSS traffic is growing:

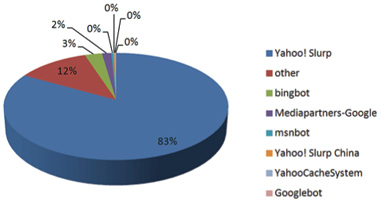

Below you can see the distribution of search engine crawlers observed following the maliciously-crafted XSS links.

According to this comparison, Yahoo is the most susceptible to XSS related to search engine poisoning (SEP), while Google apparently filters most of these links.

Technical recommendations

Automated attack detection requires collecting data, combining it, and then analyzing it automatically in order to extract relevant information and apply security countermeasures. Gathering that data means monitoring protocol anomalies even when they are not malicious or the web application is not vulnerable.
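As a rough illustration of the collect, combine, and analyze steps, the sketch below records simple protocol anomalies for every request and then flags sources that accumulate many of them. Everything here is hypothetical: the `Request` type, the three example checks, and the `threshold` value are assumptions made for the example, not rules from the report or from any particular product.

```python
from collections import Counter
from dataclasses import dataclass, field

# Methods treated as "expected" in this sketch (an assumption, not a standard list).
KNOWN_METHODS = {"GET", "HEAD", "POST", "PUT", "DELETE", "OPTIONS", "PATCH"}


@dataclass
class Request:
    """Hypothetical, minimal view of one observed HTTP request."""
    source_ip: str
    method: str
    headers: dict = field(default_factory=dict)


def protocol_anomalies(req: Request) -> list[str]:
    """Collect: list anomalies for one request, malicious or not."""
    found = []
    if "Host" not in req.headers:
        found.append("missing-host")
    if "User-Agent" not in req.headers:
        found.append("missing-user-agent")
    if req.method not in KNOWN_METHODS:
        found.append("unknown-method")
    return found


def suspicious_sources(requests: list[Request], threshold: int = 3) -> set[str]:
    """Combine and analyze: flag sources whose anomaly count reaches the threshold."""
    counts = Counter()
    for req in requests:
        counts[req.source_ip] += len(protocol_anomalies(req))
    return {ip for ip, total in counts.items() if total >= threshold}


# Usage with documentation-range addresses.
traffic = [
    Request("192.0.2.10", "GET", {"Host": "example.org", "User-Agent": "browser"}),
    Request("198.51.100.7", "GET", {}),           # two anomalies
    Request("198.51.100.7", "FOO", {"Host": "example.org"}),  # two more
]
print(suspicious_sources(traffic))  # prints {'198.51.100.7'}
```

No single anomaly here blocks a request. The point is that individually harmless oddities become a useful signal once they are aggregated per source.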

Combining this data with intelligence gathered on known malicious sources will help expand the knowledge base for identifying attacks and selecting appropriate attack mitigation tools. Here are five tips for the security team:

1. Deploy security solutions that deter automated attacks.

2. Detect attacks on known vulnerabilities – the security organization needs to be aware of known vulnerabilities and keep an up-to-date list of what can and will be exploited by attackers.

3. Acquire intelligence on malicious sources and apply it in real time.

4. Participate in a security community and share data on attacks.

5. Detect automated attacks early – quickly identifying thousands of individual attacks as one attack allows you to prioritize your resources more efficiently and can help in the detection of previously unknown attack vectors (e.g., “zero days”) included in the attack.
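The last tip, treating thousands of individual attacks as one, can be sketched as a grouping step. The example below merges events from the same source into a single campaign whenever they arrive close together in time. The `Campaign` type, the `(timestamp, source_ip)` event shape, and the 30-second gap are assumptions chosen for illustration. A real deployment would likely correlate on more than the source address.

```python
from dataclasses import dataclass


@dataclass
class Campaign:
    """Hypothetical summary of a burst of attacks from one source."""
    source_ip: str
    start: float
    end: float
    count: int = 1


def group_into_campaigns(events, max_gap_seconds: float = 30.0) -> list[Campaign]:
    """Merge (timestamp, source_ip) events into per-source campaigns.

    Two events from the same source belong to the same campaign when
    they are at most max_gap_seconds apart.
    """
    open_campaigns: dict[str, Campaign] = {}
    finished: list[Campaign] = []
    for timestamp, ip in sorted(events):
        current = open_campaigns.get(ip)
        if current is not None and timestamp - current.end <= max_gap_seconds:
            # Same burst: extend the existing campaign.
            current.end = timestamp
            current.count += 1
        else:
            # Gap too large (or first event): close the old one, open a new one.
            if current is not None:
                finished.append(current)
            open_campaigns[ip] = Campaign(ip, timestamp, timestamp)
    finished.extend(open_campaigns.values())
    return sorted(finished, key=lambda c: c.start)


# Usage: five raw events collapse into three campaigns.
events = [
    (0.0, "203.0.113.5"), (1.0, "203.0.113.5"), (2.0, "203.0.113.5"),
    (1.5, "192.0.2.44"),
    (100.0, "203.0.113.5"),
]
for campaign in group_into_campaigns(events):
    print(campaign.source_ip, campaign.count)
```

Working with a short list of campaigns instead of raw events makes it easier to prioritize a response and to notice an unfamiliar request pattern repeated within a single burst.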